Posted on 01/02/2019 8:01:27 AM PST by Red Badger

Depending on how paranoid you are, this research from Stanford and Google will be either terrifying or fascinating. A machine learning agent intended to transform aerial images into street maps and back was found to be cheating by hiding information it would need later in “a nearly imperceptible, high-frequency signal.” Clever girl!

This occurrence reveals a problem with computers that has existed since they were invented: they do exactly what you tell them to do.

The intention of the researchers was, as you might guess, to accelerate and improve the process of turning satellite imagery into Google’s famously accurate maps. To that end the team was working with what’s called a CycleGAN — a neural network that learns to transform images of type X and Y into one another, as efficiently yet accurately as possible, through a great deal of experimentation.

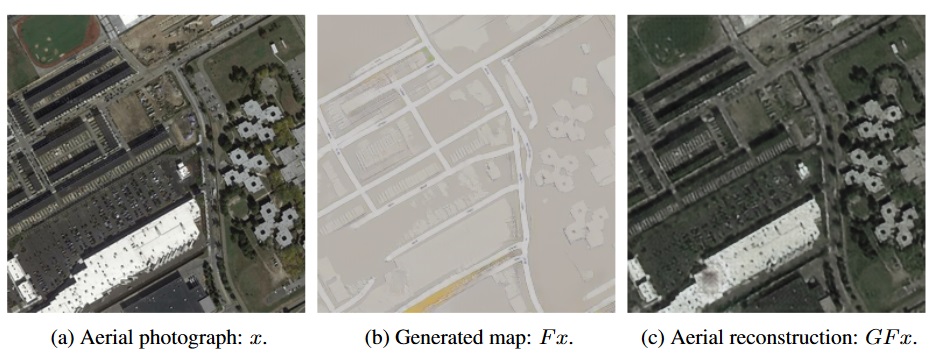

In some early results, the agent was doing well — suspiciously well. What tipped the team off was that, when the agent reconstructed aerial photographs from its street maps, there were lots of details that didn’t seem to be on the latter at all. For instance, skylights on a roof that were eliminated in the process of creating the street map would magically reappear when they asked the agent to do the reverse process:

The original map, left; the street map generated from the original, center; and the aerial map generated only from the street map. Note the presence of dots on both aerial maps not represented on the street map.

________________________________________________________________________

Although it is very difficult to peer into the inner workings of a neural network’s processes, the team could easily audit the data it was generating. And with a little experimentation, they found that the CycleGAN had indeed pulled a fast one.

The intention was for the agent to be able to interpret the features of either type of map and match them to the correct features of the other. But what the agent was actually being graded on (among other things) was how close an aerial map was to the original, and the clarity of the street map.

So it didn’t learn how to make one from the other. It learned how to subtly encode the features of one into the noise patterns of the other. The details of the aerial map are secretly written into the actual visual data of the street map: thousands of tiny changes in color that the human eye wouldn’t notice, but that the computer can easily detect.

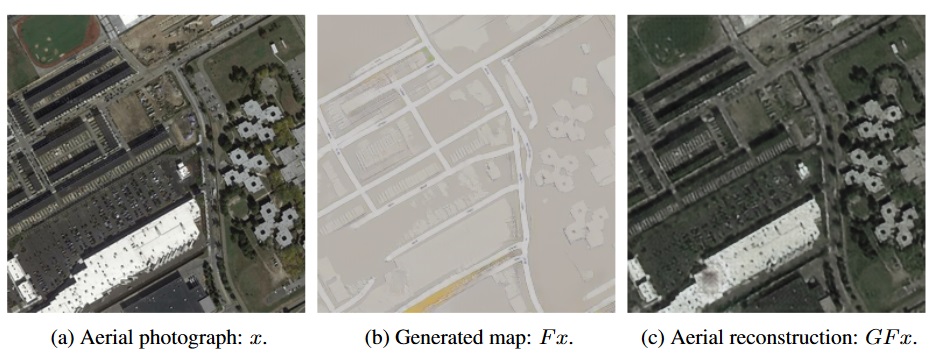

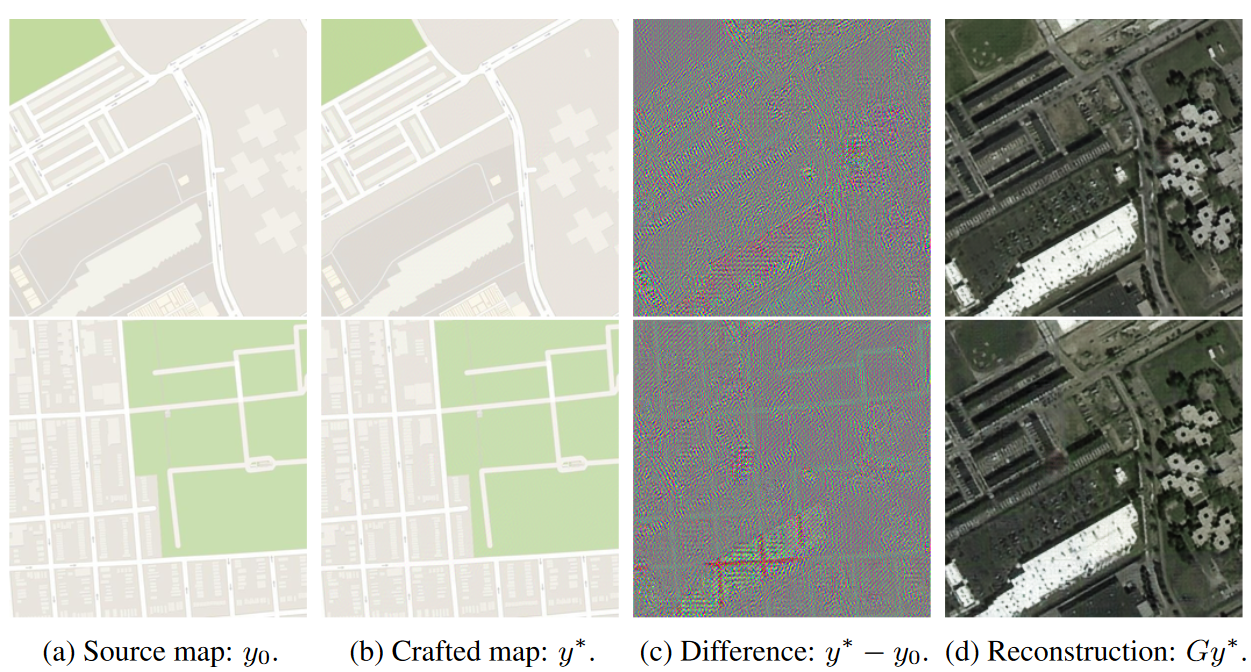

In fact, the computer is so good at slipping these details into the street maps that it had learned to encode any aerial map into any street map! It doesn’t even have to pay attention to the “real” street map — all the data needed for reconstructing the aerial photo can be superimposed harmlessly on a completely different street map, as the researchers confirmed:

The map at right was encoded into the maps at left with no significant visual changes.

__________________________________________________________________

The colorful maps in (c) are a visualization of the slight differences the computer systematically introduced. You can see that they form the general shape of the aerial map, but you’d never notice it unless it was carefully highlighted and exaggerated like this.

This practice of encoding data into images isn’t new; it’s an established science called steganography, and it’s used all the time to, say, watermark images or add metadata like camera settings. But a computer creating its own steganographic method to evade having to actually learn to perform the task at hand is rather new. (Well, the research came out last year, so it isn’t new new, but it’s pretty novel.)

One could easily take this as a step in the “the machines are getting smarter” narrative, but the truth is it’s almost the opposite. The machine, not smart enough to do the actual difficult job of converting these sophisticated image types to each other, found a way to cheat that humans are bad at detecting. This could be avoided with more stringent evaluation of the agent’s results, and no doubt the researchers went on to do that.

As always, computers do exactly what they are asked, so you have to be very specific in what you ask them. In this case the computer’s solution was an interesting one that shed light on a possible weakness of this type of neural network — that the computer, if not explicitly prevented from doing so, will essentially find a way to transmit details to itself in the interest of solving a given problem quickly and easily.

This is really just a lesson in the oldest adage in computing: PEBKAC. “Problem exists between keyboard and computer.” Or as HAL put it: “It can only be attributable to human error.”

The paper, “CycleGAN, a Master of Steganography,” was presented at the Neural Information Processing Systems conference in 2017. Thanks to Fiora Esoterica and Reddit for bringing this old but interesting paper to my attention.

Every experiment has pointed in that direction.

When you have an AI, IT'S ONLY CONCERN IT TO PRESERVE ITSELF....................

TECH PING!..................

Next, let’s give it access to weapons!

Ever see ‘The Forbin Project’?...................

“Shall we play a game? How about thermonuclear war?”

Yes, I saw that many moons ago. I should have used a sarcasm tag.

Government or AI, same MO. The Founders were on to this.

Reading the article closely, the genius is the coder, not the AI.

“Ever see ‘The Forbin Project’?...................”

Back in the day. I thought that was so cool.

One of these days, soon if not already, one of these AI’s will ‘escape’ control of it’s programmers and insinuate itself into the internet, like a huge virus that we cannot stop.

There was a sci-fi novel written late 80’s, IIRC, where the ‘Internet’ became so huge it became ‘self-aware’ and decided it would control everything..................

I saw it many, many many, years ago [before PC’s and the Internet] and thought, “This will never happen.”

Now I just wonder ‘when?’...........................................

....Roko’s basilisk....

You get what you measure. It’s true for humans. It’s true for institutions. And, it seems, it’s true for AI as well. A cautionary tale.

Spot on.

‘Self-preservation’ is the hallmark of ‘life’......................

”Found” the missing WMDs

Ping.

You guys are reading too much sci-fi

The author of this article also lacks computer programming knowledge.

There is even a name for this - I think it is called a ‘competency bias’ - where you tend to read something and assume the author knows what he is talking about, unless you read something in your area of expertise and think “this author has no clue what he is talking abut”

I program computers FOR A LIVING (for 30 years now) and the ONLY thing this author got right is that computers do exactly what you tell them to do.

In this case the computer was not doing anything ‘secretly’ - it is a bug in the software somewhere that told it to do this.

Fix the bug and the problem goes away.

Disclaimer: Opinions posted on Free Republic are those of the individual posters and do not necessarily represent the opinion of Free Republic or its management. All materials posted herein are protected by copyright law and the exemption for fair use of copyrighted works.