Posted on 05/23/2009 3:12:02 PM PDT by betty boop

An excerpt from

ROBERT ROSEN: THE WELL POSED QUESTION AND ITS ANSWER — WHY ARE ORGANISMS DIFFERENT FROM MACHINES?

By Donald C. Mikulecky

Department of Physiology

Medical Campus of Virginia Commonwealth University

The Well Posed Question and Its Answer

Science, perception and measurement: The role of the modeling relation

In order to deal with some very confusing issues, it is necessary to formulate just what it is we think we are doing when we carry out this function called “science.” In a very real sense, what we mean by science is the ultimate version of what humans do quite regularly, namely the perception of their world. The “perception of the world” is merely the way humans turn sensory information into awareness. What is that all about? Here's one idea that will serve the purpose for this discussion (Fischler and Firschein, 1987, 233):

“No finite organism can completely model the infinite universe, but even more to the point, the senses can only provide a subset of the needed information; the organism must correct the measured values and guess at the needed missing ones.”... “Indeed, even the best guesses can only be an approximation to reality — perception is a creative process.”

This simple observation is fraught with meaning, so much so that it is worth examining its implications in some detail.

The traditional view of science: the role of measurement

Science is the way we have developed to avoid our perception’s being “creative” in the above sense. Science is a creative endeavor, but the creativity must not cloud our sensation of the world in any way. In order to accomplish this we have developed a methodology that is supposed to prevent our minds from tampering with the sensory information. We call this measurement. Often the methodology that ensures this “objective” view of the world is called the scientific method. It should be clear that our notion of objectivity is intimately associated with this concept.

Rosen's treatment of measurement

Since Rosen devoted at least an entire book to this topic (Rosen, 1978), it will be necessary to give a summary here. The process of measurement is something Rosen saw as related to a number of other important concepts that will be involved in this development. Along with measurement are recognition, discrimination, and classification. As we shall see, it is impossible (even if desirable to some) to reduce the issue of measurement to something independent of these other factors.

Two propositions are axiomatic in the formalization of the role of measurement in our perception. Bear in mind that what is being developed here is a way of dealing with the traditional view of science.

PROPOSITION 1: “The only meaningful physical events which occur in the world are represented by the evaluation of observables on states.”

PROPOSITION 2: “Every observable can be regarded as a mapping from states to real numbers.”

Rosen warns us that the consequences of adopting these propositions as a mode of operation are very profound. They are, however, the kind of price science is willing to pay for its claim to be able to minimize the role of the conscious mind in the perception of sensory information. It should be clear that the act of measurement is an abstraction. We will return to this point shortly. The trade-off is in the belief that, by making this abstraction, the “world” has qualities which, when measured properly, are common to all objective observers. A quote from Fundamentals of Measurement sums it all up very well:

“It is essential to realize at this point that the formalism to be developed, although we cast it initially primarily in the framework of natural systems, is in fact applicable to any situation in which a class of objects is associated with real numbers, or in fact classified or indexed by any set whatever. It is thus applicable to any situation in which classification, or recognition, or discrimination is involved; indeed, one of the aims of our formalism is to point up the essential equivalence of the measurement problem in physics with all types of recognition or classification mechanisms based on observable properties of the objects being recognized or classified.”
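To make Proposition 2 and the quoted passage a little more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions, not Rosen's formalism: the states, the observables `mass`, `temperature` and `warmth`, and the helper `classify` are all hypothetical names invented for this example. Each observable is a mapping from states to values, and each one sorts the states into classes of states it cannot tell apart.

```python
from collections import defaultdict


def classify(states, observable):
    """Group states by the value the observable assigns to them."""
    classes = defaultdict(list)
    for state in states:
        classes[observable(state)].append(state)
    return dict(classes)


# Hypothetical states: (mass in kg, temperature in K) pairs.
states = [(1.0, 300.0), (1.0, 350.0), (2.0, 300.0)]


def mass(state):
    # An observable in the sense of Proposition 2: state -> real number.
    return state[0]


def temperature(state):
    return state[1]


def warmth(state):
    # The quote allows indexing "by any set whatever", so labels work too.
    return "warm" if state[1] > 320.0 else "cool"


by_mass = classify(states, mass)
by_temperature = classify(states, temperature)
by_warmth = classify(states, warmth)

for name, classes in [("mass", by_mass),
                      ("temperature", by_temperature),
                      ("warmth", by_warmth)]:
    print(name, classes)
```

The first two states land in the same class under `mass` but in different classes under `temperature`. In this toy setting, that is what it means to say that measuring and classifying are the same kind of act: what you can discriminate depends on which observable you chose.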

The modeling relation: how we perceive

The modeling relation is based on the universally accepted belief that the world has some sort of order associated with it; it is not a hodge-podge of seemingly random happenings. It depicts the elements of assigning interpretations to events in the world. The best treatment of the modeling relation appears in the book Anticipatory Systems (Rosen, 1985, pp. 45–220). Rosen introduces the modeling relation to focus thinking on the process we carry out when we “do science.” In its most detailed form, it is a mathematical object, but it will be presented in a less formal way here. It should be noted that the mathematics involved is among the most sophisticated available to us. In its purest form, it is called “category theory” (Rosen, 1978, 1985, 1991). Category theory is a stratified or hierarchical structure without limit, which makes it suitable for modeling the process of modeling itself.

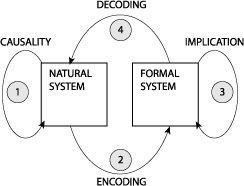

Figure 1. The modeling relation.

Figure 1 represents the modeling relation in pictorial form. The figure shows two systems, a natural system and a formal system, related by a set of arrows depicting processes and/or mappings. The assumption is that when we are “correctly” perceiving our world, we are carrying out a special set of processes that this diagram represents. On the left is the natural system, which is something that we wish to understand. In particular, arrow 1 depicts causality in the natural world. This idea will need some additional explanation further on. On the right is the formal system, some creation of our mind or something our mind uses in order to try to deal with observations or experiences we have. Arrow 3 is called “implication” and represents some way in which we manipulate the formal system to try to mimic causal events observed or hypothesized in the natural system on the left. Arrow 2 is some way we have devised to encode the natural system or, more likely, select aspects of it (having performed a measurement as described above) into the formal system. Finally, arrow 4 is a way we have devised to decode the result of the implication event in the formal system to see if it represents the causal event’s result in the natural system. Clearly, this is a delicate process and has many potential points of failure. When we are fortunate enough to have avoided these failures, we have actually succeeded in making the following relationship true:

1 = 2 + 3 + 4.

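Here “+” means following one arrow after another (encode, then imply, then decode), not arithmetic addition. When the relationship holds, we say that the diagram commutes and that we have produced a model of our world.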
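The relationship 1 = 2 + 3 + 4 can be sketched in a few lines of Python. This is a toy illustration, not anything from Rosen or Mikulecky: the `Culture` class, the doubling "law", and the function names are all hypothetical, chosen only to give each of the four arrows something to do. The check compares what the natural system does (arrow 1) with what we get by encoding, running the formal rule, and decoding (arrows 2, 3, 4).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Culture:
    """Hypothetical natural system: a bacterial culture."""
    cells: int
    colour: str  # an aspect of the system that the encoding ignores


def causality(culture):
    # Arrow 1: what the natural system does in one time step (assumed).
    return Culture(cells=culture.cells * 2, colour=culture.colour)


def encode(culture):
    # Arrow 2: measurement picks out one aspect, the cell count.
    return culture.cells


def doubling(n):
    # Arrow 3: an implication rule in the formal system.
    return 2 * n


def add_ten(n):
    # A rival arrow 3, used to show a diagram that fails to commute.
    return n + 10


def decode(n):
    # Arrow 4: read the formal result back as a predicted cell count.
    return n


def commutes(culture, implication):
    """True if arrow 1 agrees with arrows 2, 3, 4 for this state."""
    observed = encode(causality(culture))
    predicted = decode(implication(encode(culture)))
    return observed == predicted


samples = [Culture(5, "amber"), Culture(10, "amber"), Culture(40, "clear")]
print([commutes(c, doubling) for c in samples])  # [True, True, True]
print([commutes(c, add_ten) for c in samples])   # [False, True, False]
```

Note that the `add_ten` rule happens to agree with nature on the one sample where the count is 10. In this sketch, agreement on a single observation is not enough to call something a model; the diagram has to commute across the states we care about.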

Please note that the encoding and decoding mappings are independent of the formal and/or natural systems. In other words, there is no way to arrive at them from within the formal system or natural system. This makes modeling as much an art as it is a part of science. Unfortunately, this is probably one of the least well appreciated aspects of the manner in which science is actually practiced and, therefore, one which is often actively denied. It is this fact, among others, which makes the notion of objectivity as defined above have a very shaky foundation. How could such a notion become so widely accepted?

The Newtonian Paradigm and the modeling relation

Traditional science as described above is the result of many efforts, yet it has a core set of beliefs underlying it which Rosen refers to as The Newtonian Paradigm. There is no strict definition of what this is, but it is the entire attitude and approach that arose after Newton introduced his mechanics and, especially, his mathematical approach. It certainly embodies the ideas of Descartes and the heliocentrists, for example. It also embodies all of the changes brought about by quantum mechanics. It is so much what modern science is that it could almost be used as a synonym. For these reasons, it has had a profound effect on our perception. It is so powerful a thought pattern that it has seemed to make the modeling relation superfluous. For The Newtonian Paradigm, all of nature encodes into this formal system and then can be decoded. All our models come from this one largest model of nature. In the modeling relation, the formal system lies over the natural system and the encoding and decoding are masked, so that the formal system appears to be the real world. The fact that this is not the case is far from obvious to most. The task, then, is to understand why.

Putting it all together: the modeling relation is the key

Rosen calls the results of our sensory experiences, as they manifest themselves in our awareness, percepts. If all we did were to use measurement to objectively become aware of what our senses pick up, the situation would be simple. We would be like a piece of magnetic tape or computer memory filing away this information as it comes in. The key word in the definition of percept is awareness. There is more to that awareness than a mere entering into memory. The first thing we would have to do, even to merely file the information correctly, is to discriminate and classify. In short, we form relations between percepts. What is fascinating about this is the fact that these relationships between percepts can be matched by relationships between objects used in the formal system. Here is the place where semiotics and other aspects of our thought process get mixed into the process in an irreducible way (Dress, 1998, 1999). [Itals added for emphasis in this passage.]

The confusion that arises from the failure to recognize this process at work is immense. Rosen’s whole concept of the modeling relation is the explanation for why words like complexity and emergence have become so popular. The suppression of awareness of the process by the Newtonian Paradigm resulted in some real problems, surprises, and errors. It was not until there was widespread recognition, consciously or unconsciously, that this paradigm was inadequate that these words became widely talked about. The world as modeled by the Newtonian Paradigm was but one possible picture of the world. Rosen named this world the world of simple systems or mechanisms.

There is another world, namely the one containing the natural systems we seek to understand, which cannot be totally captured by the Newtonian model. This world, in fact, cannot be captured by any number of formal systems except in the limit of all such systems. The name of this world is the world of the complex. Emergence, then, is the phenomenon of being surprised when the real world doesn’t conform to the simple model; in other words, it is the discovery of the world's complexity. Since the entire real world is complex, discussions of degrees of complexity refer to the nature and number of formal systems being used to create models within the modeling relation. Unless this is realized, the amount of confusion generated trying to classify things by their complexity can be immense. There are many other definitions of complexity (Horgan, 1996) that exemplify this confusion.

Given the modeling relation and the detailed structural correspondence between our percepts and the formal systems into which we encode them, it is possible to make a dichotomous classification between various models of the real world. These models are either simple mechanisms or complex systems. It then becomes possible to formulate the “what is life” question in an entirely new way, one which leads immediately to an answer.

Complex systems and machines: why are they different?

The answer to this question is implicit in the discussion of the modeling relation above. In order to make it explicit, there are some very important epistemological prerequisites that must be accepted. This acceptance may be for the sake of the argument or it may be a total change in direction for anyone seeking to do science in the future. The case will be made systematically.

What is a machine?

The discussion of the modeling relation established that the world of the Newtonian Paradigm is a world of simple mechanisms or machines. As Rosen began to apply this idea to the world, he saw that it had an extremely general categorical application. To say it as concisely as possible, this world was the world described by Church’s Thesis. In other words, it is a totally syntactic world, one that can be constructed by algorithms and simulated. It has a largest model from which all other models can be derived. Its analytic models and synthetic models are the same. This leads to their reducibility: the whole is merely the sum of its parts. The machine which becomes a prototype of this general description is the Universal Turing Machine. Thus all of computer simulation, Artificial Life and Artificial Intelligence are part of this world. The fact that these are not part of the world of complex systems directly contradicts the claims made by most of those who have espoused the “new science” of complexity. Church’s Thesis says that all effective systems are computable. Rosen’s work says that Church’s Thesis is false. There is no middle ground here. The difference is one of profound epistemological significance. There is still another distinction that must wait until the subject of causality and entailment is discussed, for it is in that discussion that the most profound epistemological change will be realized. Before delving into that matter we will compare complex systems to machines.
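For readers who have not met a Turing machine, the following Python sketch shows what "totally syntactic" means in practice. It is a generic textbook-style simulator written for this thread, not code from Rosen or Mikulecky; the names `run` and `INCREMENT_RULES`, the state names, and the choice of binary increment as the task are all assumptions made for illustration. The machine only looks up (state, symbol) pairs in a table; nothing in it knows that the tape "means" a number.

```python
def run(tape_str, rules, state="right", blank="_", max_steps=1000):
    """Run a one-tape Turing machine and return the final tape contents."""
    tape = dict(enumerate(tape_str))  # sparse tape: position -> symbol
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = tape.get(head, blank)
        # Pure table lookup: the rules never consult any "meaning".
        write, move, state = rules[(state, symbol)]
        tape[head] = write
        head += {"L": -1, "R": 1, "N": 0}[move]
    cells = [tape.get(i, blank) for i in range(min(tape), max(tape) + 1)]
    return "".join(cells).strip(blank)


# (state, symbol read) -> (symbol to write, head move, next state)
INCREMENT_RULES = {
    ("right", "0"): ("0", "R", "right"),  # scan to the right end
    ("right", "1"): ("1", "R", "right"),
    ("right", "_"): ("_", "L", "carry"),  # step back onto the last digit
    ("carry", "1"): ("0", "L", "carry"),  # 1 plus carry gives 0, carry on
    ("carry", "0"): ("1", "N", "halt"),   # absorb the carry and stop
    ("carry", "_"): ("1", "N", "halt"),   # ran off the left end: new digit
}

print(run("1011", INCREMENT_RULES))  # 1100
print(run("111", INCREMENT_RULES))   # 1000
```

That the output can be read as "eleven plus one is twelve" is an interpretation we bring to the tape from outside, which is roughly the role the encoding and decoding arrows play in the modeling relation.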

What is a complex system and why is a complex system different from a machine?

A complex system falls outside the formalism called the Newtonian Paradigm. That is not to say that complex systems cannot be seen as machines for limited kinds of analysis. This is, in fact, what traditional science does. Using Rosen’s general characteristics to separate the two kinds of objects, we see that complex systems contain semantic aspects which cannot be reduced to syntax. Therefore they are not simulatable even though, when viewed as machines, the machine model is simulatable. They have no largest model from which all other models can be derived. This is simply because complex systems, by their very nature, require multiple distinct ways of interacting with them to capture their qualities. Their models are now distinct. Analytic models, which are expressed mathematically as direct products of quotient spaces, are no longer equivalent to synthetic models, which are built up from disjoint pieces as direct sums. Using this formulation, every synthetic model is an analytic model, but there are analytic models which are not synthetic models. In other words, these analytic models are not reducible to disjoint sets of parts. This is a most profound distinction and requires some elaboration, for in it lies the essence of the failure of reductionism. In the machine, each model, analytic or synthetic, is formulated in terms of the material parts of the system. Thus any model will be reducible and can be reconstructed from its parts.

This is not the case in a complex system. There are certain key models which are formulated in an entirely different way. These models are made up of functional components which do not map to the material parts in any one-to-one manner. The functional component itself is totally dependent on the context of the whole system and has no meaning outside that context. This is why reducing the system to its material parts loses information irreversibly. This is a cornerstone to the overall discovery Rosen made. It captures a real difference between complexity and reductionism which no other approach seems to have been able to formulate. This distinction makes it impossible to confuse computer models with complex systems. It also explains how there can be real “objective” aspects of a complex system that are to be considered along with the material parts, but which have a totally different character. Finally, this distinction between functional components and parts can be realized with an appropriate formalism. This formalism is called Relational after Rashevsky's Relational Biology (Rashevsky, 1954). [Itals added for emphasis in this passage.]

* * * * * * *

End of excerpt. Read at the above link for details of Rosen’s profoundly important (it seems to me, FWIW) insights.

Scientists who may be inclined to resist this new direction (which is tantamount to putting the formal and final causes which Francis Bacon banished from the scientific method back into the mix) perhaps need to be reminded that recent developments in the biosemiotics field (not to mention complexity and information science) increasingly point to the idea that meaning (formal cause) and purpose (final cause) really do operate in Nature. In Rosen, the semiotics (also called semantics) — the science of definition, or meaning — belongs to the “formal” system. The material system expresses the “syntax” — or “rules of the grammatical road” (so to speak) that express the meaning of the formal system, particularly as it refers to the question, “What is Life?”

Please go to the above link for an edifying, fascinating discussion of these issues that are rising to the fore in the natural sciences.

I found Mikulecky's article an absolutely astounding and valuable read — FWIW!

What do you think?

I think it's too much reading for a Saturday afternoon

Why is air different than dirt?

Who is Spain?

Organisms are mushy and machines are not.

read later

Yikes!

This is a heck of a read! Perhaps you can distill the point of the article?

Is this sort of like mortal man trying to explain the immortal? Can we ever understand creation? I don’t think so, but then again I could be wrong for I am just a mortal man. :-)

Because (intelligent) organisms build machines and not the other way around.

Oh, that one is easy. Answer: Because we say it is. Plus we have lots of "science" that can "prove" it. Got it?

And they aren’t tasty when BBQ’d.

Does this mean SUV’s have a right to life?

What is an organism? Sounds like the question he is really asking is why life, or the life force, is different from a machine. It's an odd question to me.

It may become one of the most important and hotly contested issues of this century.

I would answer the question with a riff on the temporal nature of each ... machines are stuck in linear time whereas organisms (the soul; not the spirit, the soul, as in all living things; we humans have a spirit component, too) exist in planar time and use linear temporal aspects for expression in linear time.

They also taste different when mixed in the blender.

lol — your comment follows MHGinTN’s perfectly.

Seriously, among other things Rosen's work "comforts us" that Cyberdyne's intelligent yet malignant robots can be safely consigned to the realm of science fiction, and need not be entertained as a possibility capable of realization in any real human future.

Unless they find a way (are programmed by their designers) to be originators and willing agents of "formal causes." I don't know how it is even possible for such a thing to be accomplished — but the AI folks around here might have some ideas about that. And if they do, I hope they will weigh in with their insights here, soon.

I further acknowledge this subject matter deals with an entirely open question at this point. But a potentially immensely fruitful one. And I can tell you that there are scientists who appreciate Rosen's insights, and are now following his lead....

For instance, see an article by Kineman, J.J. and Kineman, J.R., "Life and Space-Time Cosmology." It appeared in the Proceedings of the 44th Annual Meeting of the International Society for the Systems Sciences (ISSS), Toronto, Canada, 2000. Unfortunately, it does not seem to be available at their site. (Why it is so difficult to locate on-line is beyond me.) But a friend who attended passed along a copy of the presentation, in Word format. If you'd like to see it, give me a yell via private FReepmail.
