Apple-1

Posted on 06/09/2020 12:36:32 PM PDT by Swordmaker

I loaded up 4 different games/programs, set each one to a different resolution, and reduced their sizes so all 4 would run on the screen at the same time. You could hear the sound from each game at the same time.

Next I inserted a disk in each of the 3 floppy drives and formatted all 3 at the same time.

Not to be outdone, I loaded and ran a C64 program and a PC program, and they all ran.

My friend just stood there shaking his head.

These processors are based on the ARM architecture. The latest versions have tested as more powerful and faster than the Intel processors in the lowest-priced MacBook Pro, while using less energy.

—

This is going to get interesting. Thermal management in current machines takes a lot of design effort: fans, coolers, wasted battery. If you can use an ARM chip and it doesn’t need any special cooling, the design of laptops and desktop machines gets way simpler.

My 2018 Mac mini with the 6-core i7 runs hot; I have seen the cores hit 212 °F (100 °C) more than once.

—

Thermal throttling is an issue with a lot of computers. They spec out with this fantastic multi-gigahertz rate, until they get as hot as a toaster, then they throttle back to prevent a melt-down.

Been watching entertaining videos on YouTube from Linus Tech Tips, many of which revolve around how gaming PCs are cooled. I swear some of the CPU coolers on those machines look like they took the radiator out of a 57 Chevy.

Thank you!

Ed

It’s at least 4” square and at least an inch thick, and I can install a fan on both sides. It stands up from the motherboard several inches.

The GPU has 2 of everything, processors, heatsinks, and fans.

It doesn't have any cooling problems.

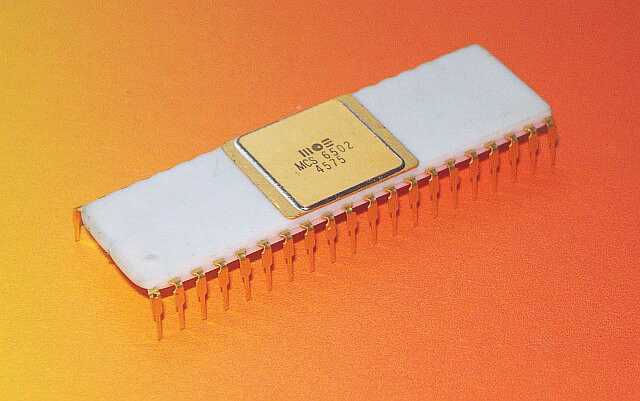

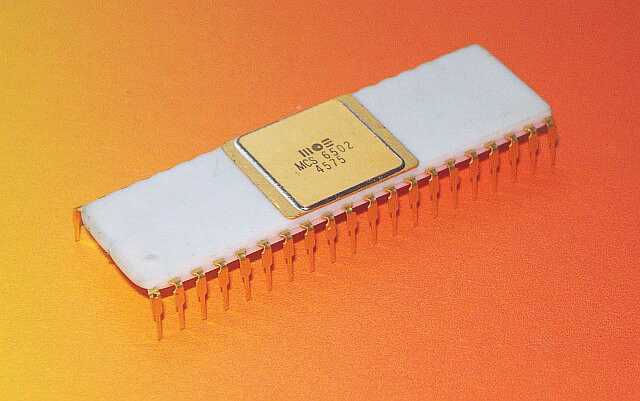

Formerly PowerPC, Motorola 68K before that. ...if I remember correctly.

I remember doing demos like that. At one time, I had more hard drive storage connected to my Amiga than the headquarters of the Bank of California had downtown!

The Copper chip allowed changing the screen resolution on the fly, so it was possible to have different sections of the monitor screen displaying at different resolutions. THAT ALONE blew away most tech people.
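For anyone curious how that worked under the hood, here is a rough Python sketch of what a Copper list for a split screen might look like. It only builds the instruction words; it does not drive real hardware. The helper names (`move`, `wait`, `bplcon0`, `split_screen`) are made up for illustration. The register offset and bit values are from memory of the original chipset documentation. A real resolution change would also need to reload bitplane pointers, data-fetch registers, and palette, all of which is left out here.

```python
# Toy model of an Amiga Copper list: a sequence of 16-bit word pairs.
# Helper names are hypothetical; only the encodings follow the hardware docs.

BPLCON0 = 0x100   # bitplane control register, offset from the custom chip base
HIRES = 0x8000    # bit 15: high-resolution mode
COLOR = 0x0200    # bit 9: composite color enable

def move(reg, value):
    """MOVE: write `value` to a custom register (first word is even)."""
    return (reg & 0x01FE, value & 0xFFFF)

def wait(line, hpos=0):
    """WAIT: stall until the beam reaches `line` (first word is odd)."""
    return (((line & 0xFF) << 8) | (hpos & 0xFE) | 1, 0xFFFE)

def bplcon0(planes, hires):
    """Compose a BPLCON0 value: bitplane count in bits 14-12 plus mode bits."""
    return (HIRES if hires else 0) | (planes << 12) | COLOR

END = (0xFFFF, 0xFFFE)  # conventional end of list: wait for an unreachable position

def split_screen(split_line):
    """Hi-res with 4 bitplanes on top, lo-res with 5 bitplanes below the split."""
    return [
        move(BPLCON0, bplcon0(4, hires=True)),
        wait(split_line),
        move(BPLCON0, bplcon0(5, hires=False)),
        END,
    ]

if __name__ == "__main__":
    for first, second in split_screen(100):
        print(f"{first:04X} {second:04X}")
```

The point is that the mode switch is just one more register write, timed to the video beam, so the CPU does not have to be involved at all once the list is set up.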

I started programming on a TRS80 CoCo @ school. My parents got me a CoCo2 when I was 7 ... I learned a lot from that machine. I used that up until 1986 when my dad surprised me with a Commodore 64. I used that up until 1991. I still have both of those :-). I remember getting a disk drive, speech synthesizer, and one of those multi-cart expansion things for the CoCo for $50 when a local Radio Shack was clearing that stuff out.

The C64 is an awesome machine. I’d love to know how many careers that breadbox launched :-). While the CoCo was a better platform for education (and had a better 6809 processor), more people were familiar with the C64 ... I learned basic hardware interfacing and other stuff from a lot of local users. I also remember using “Super C Compiler” on the C64 ... it was total garbage, but it introduced me to the language I still use to this day for writing SW (I mostly design logic for “big” FPGAs).

I couldn’t contain myself the first time I saw an Amiga ... yeah, I’m a nerd :-). Looking back, I cannot imagine how that machine never caught on here in the USA. The bang for the buck that you got from an Amiga was off the charts. It had incredible AV capabilities and an ahead of its time OS.

I’ve started digging into an Atari 800 and an Atari ST that I found on the cheap (both needed repair, but the problems were easy power-supply issues). I like the 800’s graphics system a bit more than the C64’s, but the audio (POKEY) doesn’t hold a candle to the SID :-).

I can’t see how the ST could outperform the Amiga :-). They’re very similar architectures, but the Amiga’s AV chipset blew it out of the water.

I could be wrong; however, I'd swear I've read in the last few years that Apple was making their own processor (again) and was moving away from Intel.

None of this is a surprise, is it?

Yep. My first serious code was extensions to the Tandy Color Computer ‘Disk Extended Color Basic’ ROM, written in Moto 6809 assembler, with the ROM copied to the upper 32K of the 64K RAM memory map. Oh man, I miss the old (early 1980s) days.

I think IBM wanted Apple to pay for the development costs of the G5. Jobs would have none of it.

So they shlepped out a version of the dual core Power4 which retained the power consumption of a server CPU because it was less work?

That ... actually kinda makes sense.

One thing that’s not widely known is that NeXT (developer of Nextstep, the predecessor of macOS) supported multiple CPU architectures via “fat binaries”, essentially bundling machine code for all supported processors into a single file.
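To make the “fat binary” idea concrete, here is a small Python sketch that packs two fake code slices into one blob and then reads them back by architecture. The layout is loosely modeled on the Mach-O universal binary header that macOS uses today (a big-endian magic number and slice count, followed by one table entry per architecture). The function names `build_fat` and `list_archs` are made up for this example, the payloads are placeholder bytes rather than real machine code, and real files align each slice instead of packing them back to back.

```python
import struct

FAT_MAGIC = 0xCAFEBABE  # magic number at the start of a universal binary

# A few CPU type codes as defined in the Mach-O headers.
CPU_NAMES = {
    7: "i386",
    18: "ppc",
    0x01000007: "x86_64",
    0x0100000C: "arm64",
}

def build_fat(slices):
    """Bundle (cputype, payload) pairs into one blob. Simplified: no alignment."""
    header_size = 8 + 20 * len(slices)   # 8-byte header + 20 bytes per entry
    header = struct.pack(">II", FAT_MAGIC, len(slices))
    body = b""
    offset = header_size
    for cputype, payload in slices:
        # Entry fields: cputype, cpusubtype, offset, size, align (all big-endian)
        header += struct.pack(">iiIII", cputype, 0, offset, len(payload), 0)
        body += payload
        offset += len(payload)
    return header + body

def list_archs(blob):
    """Return [(arch name, payload)] for every slice found in the blob."""
    magic, count = struct.unpack_from(">II", blob, 0)
    if magic != FAT_MAGIC:
        raise ValueError("not a fat binary")
    found = []
    for i in range(count):
        cputype, _sub, offset, size, _align = struct.unpack_from(
            ">iiIII", blob, 8 + 20 * i
        )
        name = CPU_NAMES.get(cputype, hex(cputype))
        found.append((name, blob[offset:offset + size]))
    return found

if __name__ == "__main__":
    blob = build_fat([(0x01000007, b"intel code"), (0x0100000C, b"arm code")])
    for name, payload in list_archs(blob):
        print(name, payload)
```

At launch time the loader simply picks the table entry that matches the CPU it is running on and ignores the rest, which is why one download can serve both architectures.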

All the Apple development tools can easily support this, and since Apple has experience switching architectures already (PowerPC -> Intel) I’m pretty sure they’ll wait until all is in place before releasing hardware.

Expect this to initially be the lower-end systems (MacBook, MacBook Air, Mac mini), and then it will “filter up” as Apple develops more powerful chips with more cores.

Google has developed ARM chips that compete very favorably with Intel Xeon on the high end, so it’s very possible that someday even the Mac Pro will go ARM.

Interesting times, I never thought I’d see Apple migrate away from Intel, but Intel has been having a real tough time the last few years.

No, it isn’t. However, I suspect there is going to be a two-tier Mac lineup for a while. The Mac Pro will likely be Xeon for some time, for example.
