Thu 01 Oct 2009 10:48:34 AM PST · by Ernest_at_the_Beach · 13 replies · 273+ views

HPCwire ^ | September 29, 2009 | Michael Feldman, HPCwire Editor

Posted on 10/02/2009 9:42:39 AM PDT by Ernest_at_the_Beach

Oak Ridge National Laboratory may not be the first customer that Nvidia will have for its new "Fermi" graphics processor, which was announced yesterday, but it will very likely be one of the largest customers.

Oak Ridge, one of the giant supercomputing centers managed and funded by the US Department of Energy to do all kinds of simulations and supercomputing design research, has committed to using the GPU co-processor variants of the Fermi chips, the kickers to the current Tesla GPU co-processors, in a future hybrid system that would have ten times the floating point oomph of the fastest supercomputer installed today.

Depending on the tests you want to use, the most powerful HPC box in the world is either the Roadrunner hybrid Opteron-Cell massively parallel custom blade box made by IBM for Los Alamos National Laboratory, or the Jaguar massively parallel XT5 machine at Oak Ridge, which uses only the Opterons to do calculations.

The Roadrunner machine relies on the Cell chips, which are themselves a kind of graphics processor, each with a single Power core linked in, to do the heavy lifting on floating point calculations. Each compute node in the Roadrunner consists of a two-socket blade server using dual-core Opteron processors running at 1.8GHz.

Advanced Micro Devices has six-core Istanbul Opterons in the field that are pressing up against the 3GHz performance barrier. But shifting to these faster x64 chips would not radically improve the overall performance of the Roadrunner machine.

Each Opteron blade uses HyperTransport links out to the PCI-Express bus to link to two dual-socket Cell blades.

(Excerpt) Read more at theregister.co.uk ...

Interesting article ...

Petascale Computing on Jaguar

The National Center for Computational Sciences (NCCS), sponsored by the Department of Energy’s (DOE) Office of Science, manages the 1.64-petaflop Jaguar supercomputer for use by scientists and engineers solving problems of national and global importance. The new petaflops machine will make it possible to address some of the most challenging scientific problems in areas such as climate modeling, renewable energy, materials science, fusion and combustion. Annually, 80 percent of Jaguar’s resources are allocated through DOE’s Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program, a competitively selected, peer reviewed process open to researchers from universities, industry, government and non-profit organizations.

Through a close, four-year partnership between ORNL and Cray, Jaguar has delivered state-of-the-art computing capability to scientists and engineers from academia, national laboratories and industry. The XT system has grown in strength through a series of advances since being installed as a 25-teraflop XT3 in 2005. By early 2008 Jaguar was a 263-teraflop Cray XT4 able to solve some of the most challenging problems that could not be solved otherwise. In 2008 Jaguar was expanded with the addition of a 1.4-petaflop Cray XT5. The resulting system has over 181,000 processing cores connected internally with Cray’s Seastar2+ network. The XT4 and XT5 parts of Jaguar are combined into a single system using an InfiniBand network that links each piece to the Spider file system.

Throughout its series of upgrades, Jaguar has maintained a consistent programming model for the users. This programming model allows users to continue to evolve their existing codes rather than write new ones. Applications that ran on previous versions of Jaguar can be recompiled, tuned for efficiency, and then run on the new machine.

Jaguar is the most powerful computer system for science, with world-leading performance, more than three times the memory of any other computer, and world-leading bandwidth to disks and networks. The AMD Opteron processor is a powerful, general-purpose processor that uses the x86 instruction set, which has a rich set of applications, compilers, and tools. Jaguar has hundreds of applications that have been ported and run on the Cray XT system, many of which have been scaled up to run on 25,000 to 150,000 cores. Jaguar is ready to take on the most challenging problems for the world.

25C3: Hackers completely break SSL using 200 PS3s

That doesn’t look like a basement operation....

*************************************************

Nvidia fires off Fermi, pledges radical new GPUs

*******************************EXCERPT*****************************

Three billion-transistor HPC chip, anyone?

1st October 2009 11:18 GMT

Nvidia last night introduced the new GPU design that will feed into its next-generation GeForce graphics chips and Tesla GPGPU offerings but which the company also hopes will drive it ever deeper into general number crunching.

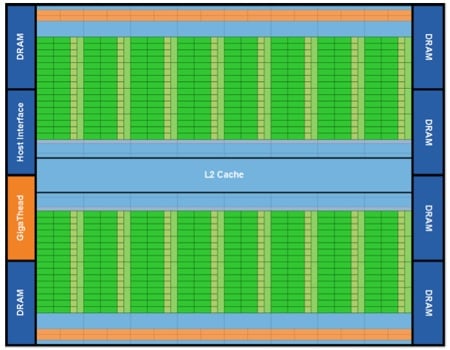

While the new chip is dubbed 'Fermi', so is the architecture that connects a multitude of what Nvidia calls "Streaming Multiprocessors". The SM design the company outlined yesterday contains 32 basic Cuda cores - four times as many as found in previous generations of SM - each comprising one integer and one floating-point maths unit. It is able to schedule two groups of 32 threads - a group Nvidia calls a "warp" - at once.

Nvidia's Fermi: each of the 16 green strips is...

The networked cores connect to 64KB of shared L1 cache, also used by four Special Function Units (SFUs) which handle complex maths formulae such as sines and cosines.

Fermi itself packs in 16 SMs - that's 512 Cuda cores in total - which tap into shared 768KB L2 cache and can reach out to a maximum of 6GB of GDDR5 memory over a 384-bit interface and with ECC support.
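The core counts quoted in the excerpt can be checked with a few lines of arithmetic. The following is only a sketch that multiplies the figures given in the article; the function and constant names are made up for illustration, and the "threads scheduled at once" number is a plain product of the quoted figures, not a throughput claim.

```python
# Figures quoted in the excerpt; names are illustrative, not Nvidia's.
SMS_PER_CHIP = 16            # Streaming Multiprocessors on the first Fermi chip
CORES_PER_SM = 32            # Cuda cores per SM
THREADS_PER_WARP = 32        # a "warp" is a group of 32 threads
WARPS_SCHEDULED_PER_SM = 2   # each SM schedules two warps at once
GENERATION_FACTOR = 4        # "four times as many" cores per SM as before


def total_cores(sms=SMS_PER_CHIP, cores_per_sm=CORES_PER_SM):
    """Cuda cores on the whole chip."""
    return sms * cores_per_sm


def threads_scheduled_at_once(sms=SMS_PER_CHIP,
                              warps=WARPS_SCHEDULED_PER_SM,
                              threads_per_warp=THREADS_PER_WARP):
    """Threads covered by the warps all SMs can schedule together."""
    return sms * warps * threads_per_warp


def previous_gen_cores_per_sm(cores_per_sm=CORES_PER_SM,
                              factor=GENERATION_FACTOR):
    """Cores per SM implied for the previous generation."""
    return cores_per_sm // factor


if __name__ == "__main__":
    print(total_cores())                # 512
    print(threads_scheduled_at_once())  # 1024
    print(previous_gen_cores_per_sm())  # 8
```

The 512 matches the total stated in the article; the other two numbers follow from the quoted figures alone.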

This is only the first Fermi GPU design. It's aimed at science and engineering GPGPU apps rather than game graphics, so future Fermi-based GeForce chips will likely sport less complex layouts. GT300, Nvidia's next GPU core, will be derived from Fermi, but don't expect it to show off all the superlatives Nvidia has been claiming for the Fermi chip.

...one of these 32-core Stream Multiprocessors

Just imagining the next generation of this type of system that could be around as soon as next year. Think of a quad eight-core Xeon with four 32-core Larrabee PCIe cards (each Xeon running one card). That’s about 8.5 TFLOPs in a 3U using off-the-shelf parts, nothing customized. You could hit a petaFLOP in nine racks.
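The nine-rack figure can be sanity-checked with a quick calculation. This is a rough sketch only: the 8.5 TFLOPs per 3U box is the poster's own estimate, and the 42U rack height (with every slot used for compute boxes, nothing reserved for switches or power) is an assumption that the post does not state.

```python
import math

TFLOPS_PER_BOX = 8.5     # poster's estimate for one 3U box
BOX_HEIGHT_U = 3         # height of one box in rack units
RACK_HEIGHT_U = 42       # ASSUMPTION: full-height rack, all slots for compute
TARGET_TFLOPS = 1000.0   # one petaFLOP


def boxes_per_rack(rack_u=RACK_HEIGHT_U, box_u=BOX_HEIGHT_U):
    """Whole 3U boxes that fit in one rack."""
    return rack_u // box_u


def racks_needed(target=TARGET_TFLOPS, per_box=TFLOPS_PER_BOX,
                 per_rack=None):
    """Racks required to reach the target, rounding up at each step."""
    if per_rack is None:
        per_rack = boxes_per_rack()
    boxes = math.ceil(target / per_box)
    return math.ceil(boxes / per_rack)


if __name__ == "__main__":
    print(boxes_per_rack())                   # 14
    print(boxes_per_rack() * TFLOPS_PER_BOX)  # 119.0 TFLOPs per rack
    print(racks_needed())                     # 9
```

Under those assumptions, 14 boxes per rack give about 119 TFLOPs, so a petaFLOP needs 118 boxes, which rounds up to nine racks and agrees with the estimate in the post.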

Yes, I know technically some video cards can easily beat this, but the range of problems they can be programmed to solve is much more limited (I learned that from Folding@Home).

See link at #12.

bump

That’s nice... but can it run a Java app? </sarc>

Right now I’m more interested in Larrabee. The biggest thing for me is that the Larrabee is full of general-purpose chips. It’s not just a huge collection of floating point units.

Disclaimer: Opinions posted on Free Republic are those of the individual posters and do not necessarily represent the opinion of Free Republic or its management. All materials posted herein are protected by copyright law and the exemption for fair use of copyrighted works.