Posted on 10/01/2009 9:17:53 AM PDT by Ernest_at_the_Beach

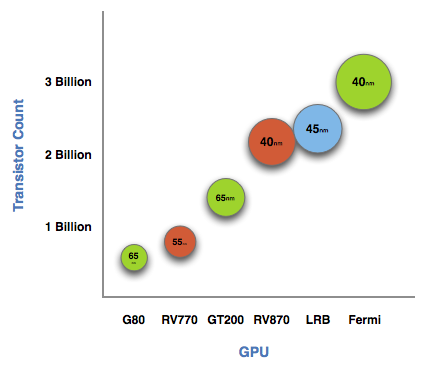

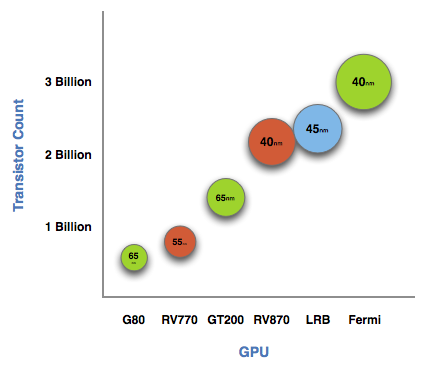

The graph below is one of transistor count, not die size. Inevitably, on the same manufacturing process, a significantly higher transistor count translates into a larger die size. But for the purposes of this article, all I need to show you is a representation of transistor count.

See that big circle on the right? That's Fermi. NVIDIA's next-generation architecture.

NVIDIA astonished us with GT200 tipping the scales at 1.4 billion transistors. Fermi is more than twice that at 3 billion. And literally, that's what Fermi is - more than twice a GT200.

At the high level the specs are simple. Fermi has a 384-bit GDDR5 memory interface and 512 cores. That's more than twice the processing power of GT200 but, just like RV870 (Cypress), it's not twice the memory bandwidth.
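As a rough sanity check on the "more than twice a GT200" claim, the ratios can be worked out in a few lines. This is only a sketch: the transistor counts and Fermi's 512 cores come from the excerpt, while the GT200 core count of 240 is an assumption taken from the GTX 280's published spec, not from the article text above.

```python
# Figures quoted in the excerpt above.
GT200_TRANSISTORS = 1.4e9
FERMI_TRANSISTORS = 3.0e9
FERMI_CORES = 512

# Assumption (not stated in the excerpt): GT200 has 240 cores,
# per the GeForce GTX 280 spec sheet.
GT200_CORES = 240


def ratio(new, old):
    """Return how many times larger `new` is than `old`."""
    return new / old


transistor_ratio = ratio(FERMI_TRANSISTORS, GT200_TRANSISTORS)
core_ratio = ratio(FERMI_CORES, GT200_CORES)

print(f"Transistors: {transistor_ratio:.2f}x GT200")
print(f"Cores:       {core_ratio:.2f}x GT200")
```

Both ratios land a little above 2, which matches the article's wording. The memory bandwidth comparison is left out because the excerpt gives no memory clock for Fermi.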

(Excerpt) Read more at anandtech.com ...

fyi

Whoa.

>>Fermi has a 384-bit GDDR5 memory interface and 512 cores. That’s more than twice the processing power of GT200 but, just like RV870 (Cypress), it’s not twice the memory bandwidth.<<

And Vista will still be a dog on it.

Sort of brings up the question, if something sucks with a lot of power, does it blow?

***************************EXCERPT***************************

When matched with the right algorithms and programming efforts, GPU computing can provide some real speedups. Much of Fermi's architecture is designed to improve performance in these HPC and other GPU compute applications.

Ever since G80, NVIDIA has been on this path to bring GPU computing to reality. I rarely get the opportunity to get a non-marketing answer out of NVIDIA, but in talking to Jonah Alben (VP of GPU Engineering) I had an unusually frank discussion.

From the outside, G80 looks to be a GPU architected for compute. Internally, NVIDIA viewed it as an opportunistic way to enable more general purpose computing on its GPUs. The transition to a unified shader architecture gave NVIDIA the chance to, relatively easily, turn G80 into more than just a GPU. NVIDIA viewed GPU computing as a future strength for the company, so G80 led a dual life. Awesome graphics chip by day, the foundation for CUDA by night.

I’ve got a GTS250 on one machine and a GTX280 on another. Both cards rock. The GTX280 also works well as a space heater.

Can I put a plug in for FR’s Folding Team? http://www.freerepublic.com/focus/f-chat/2316710/posts .....C

To describe Vista, I will quote Bart Simpson: “I didn’t think it was physically possible, but this sucks and blows at the same time.”

Same for Microsoft Office with the new ‘ribbon’ bar, which takes up 1/3 of your screen with commands you never use and moves all the commands somewhere that you can’t find them.

Open Office user here...but usually just the Text Editor on Ubuntu.

>>Windows 7 out this month.<<

IIRC, there was an article posted here that Best Buy was told/told its employees “do NOT call Windows 7 ‘Vista that works.’”

LOL.

Thanks... if some of those graphics cards get reasonable prices I might join in.

Now that’s funny!

A GeForce GTX 280 with 4GB of memory is the foundation for the Tesla C1060 cards.

And then:

Four of those C1060 cards in a 1U chassis make the Tesla S1070. PCIe connects the S1070 to the host server.

1U??? Holy Moley!

How long before it fits into a PDA?

***************************EXCERPT***************************

NVIDIA loves to cite examples of algorithms that run far better when ported to GPUs than they do on CPUs. One such example is a seismic processing application that HESS found ran very well on NVIDIA GPUs. It migrated a cluster of 2000 servers to 32 Tesla S1070s, bringing total costs down from $8M to $400K, and total power from 1200kW down to 45kW.
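For anyone who wants the HESS numbers as ratios, here is a quick back-of-the-envelope sketch. Every input comes from the excerpts in this thread (including the four C1060 cards per S1070); the variable names are just illustrative, and the results say nothing about how the costs were actually accounted for.

```python
# Figures quoted in the excerpts above.
SERVERS_BEFORE = 2000
S1070_UNITS = 32
GPUS_PER_S1070 = 4          # "Four of those C1060 cards in a 1U chassis"
COST_BEFORE_USD = 8_000_000
COST_AFTER_USD = 400_000
POWER_BEFORE_KW = 1200
POWER_AFTER_KW = 45

# Total GPUs in the replacement cluster.
total_gpus = S1070_UNITS * GPUS_PER_S1070

# Reduction factors (before divided by after).
cost_factor = COST_BEFORE_USD / COST_AFTER_USD
power_factor = POWER_BEFORE_KW / POWER_AFTER_KW

# How many of the old servers each 1U S1070 stands in for.
servers_per_unit = SERVERS_BEFORE / S1070_UNITS

print(f"GPUs in new cluster:      {total_gpus}")
print(f"Cost reduction:           {cost_factor:.1f}x")
print(f"Power reduction:          {power_factor:.1f}x")
print(f"Old servers per S1070:    {servers_per_unit:.1f}")
```

Taken at face value, that is 128 GPUs replacing 2000 servers, at a twentieth of the cost and under a twenty-fifth of the power.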
