From designing logic to designing minds

Software developers are builders at heart. They build logical links, algorithms, programs, projects, and more. The point is: they build logical stuff.

With the rise of artificial intelligence, though, we’re seeing a paradigm shift. More and more often, developers aren’t designing these logical links by hand anymore. Instead, they’re training models that learn heuristics for these logical links.

Many developers have gone from building logic to building minds. To put it differently, more and more software developers are taking on the activities of data scientists.

The three areas of automation

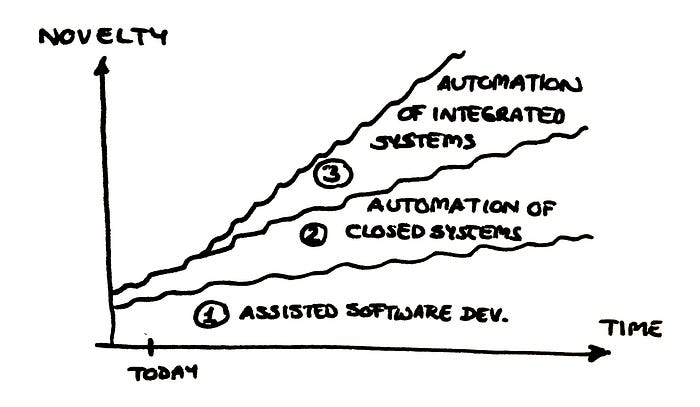

If you’ve ever used an IDE, then you know how amazing assisted software development can be. Once you’ve gotten used to features like autocomplete or semantic code search, you don’t want to go without them again.

This is the first area of automation in software development. As machines understand what you’re trying to implement, they can help you through the process.

The second area is that of closed systems. Consider a social media app: it consists of many different pages that are linked to one another. However, it’s closed insofar as it isn’t designed to directly communicate with another service.

Although the technology for building such an app is getting easier and easier to use, we can’t speak of real automation yet. As of now, you need to be able to code if you want to create dynamic pages, use variables, apply security rules, or integrate databases.

The third and last area is that of integrated systems. The API of a bank, for example, is such a system since it is built to communicate with other services. At this point in time, however, it’s close to impossible to automate ATM integrations, communications, world models, deep security, and complex troubleshooting issues.

The three areas of automation. Image by the author, but adapted from Emil Wallner’s talk at InfoQ. Software development is a bumpy road, and we don’t really know when the future will arrive.

The world through a computer’s eyes

When asked whether they’ll be replaced by a robot in the future, human workers often don’t think so. This applies to software development as well as many other areas.

Their reason is clear: qualities like creativity, empathy, collaboration, or critical thinking are not what computers are good at.

But often, that’s not what matters to get a job done. Even the most complex projects consist of many small parts that can be automated. DeepMind scientist Richard S. Sutton puts it like this:

“Researchers seek to leverage their human knowledge of the domain, but the only thing that matters in the long run is the leveraging of computation.”

Don’t get me wrong; human qualities are amazing. But we’ve been overestimating the importance of these qualities when it comes to regular tasks. For a long time, for example, even researchers believed that machines would never be able to recognize a cat in a photo.

Nowadays, machines can categorize billions of photos, and on some benchmarks they do so with greater accuracy than a human. While a machine might be unable to marvel at the cuteness of a little cat, it’s excellent at working with undefined states. That’s what a photo of a kitten is through a machine’s eyes: an undefined state.

Towards new manifolds and scales

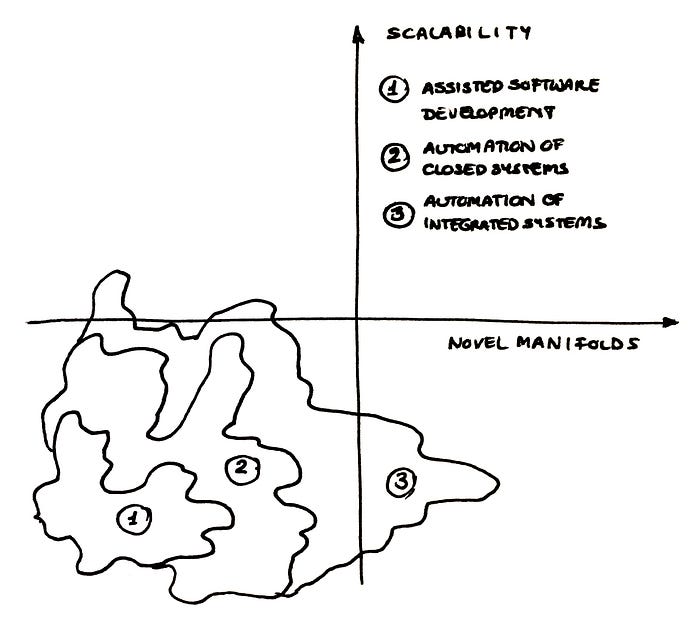

In addition to working with undefined states, there are two other things that computers can do more efficiently than humans: first, doing things at scale, and second, working on novel manifolds.

We’ve all experienced how well computers work at scale. For example, if you ask a computer to print("I am so stupid") two hundred times, it will do so without complaining, and complete the task in a fraction of a second. Ask a human, and you’ll need to wait for hours to get the job done…
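To make that concrete, here is a minimal Python sketch of the task. The helper name `print_repeatedly` is made up for this illustration, and the exact timing will depend on your machine and terminal.

```python
import time


def print_repeatedly(message, times):
    """Print `message` `times` times and return the elapsed seconds."""
    start = time.perf_counter()
    for _ in range(times):
        print(message)
    return time.perf_counter() - start


# The task from the text: two hundred repetitions, no complaints.
elapsed = print_repeatedly("I am so stupid", 200)
print(f"Done in {elapsed:.4f} seconds")
```

Changing `200` to `200_000` is a one-character-class edit for the computer; for a human, it would be a very different request.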

Manifolds are basically a fancy, or mathematical, way of referring to spaces that look flat when you zoom in closely enough on any point, even if they are curved or crumpled overall. For example, if you take a piece of paper, that’s a two-dimensional manifold in three-dimensional space. If you scrunch up the piece of paper or fold it into a paper plane, it’s still a manifold.
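The paper example can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the functions `roll` and `unroll` and the spiral shape are made up for this sketch. The point is that every location on the rolled-up sheet needs three numbers in space, yet two numbers are enough to pin it down on the sheet itself.

```python
import math


def roll(u, v):
    """Map a point (u, v) on a flat sheet onto a rolled-up sheet in 3D."""
    return (u * math.cos(u), v, u * math.sin(u))


def unroll(x, y, z):
    """Recover the sheet coordinates from a 3D point (valid for u > 0)."""
    return (math.hypot(x, z), y)


sheet_point = (2.5, 0.7)           # two coordinates on the paper
space_point = roll(*sheet_point)   # three coordinates in space
recovered = unroll(*space_point)   # back to two coordinates

print(space_point)
print(recovered)
```

A machine can handle the same idea with twenty coordinates instead of three without any extra effort, which is where humans start to struggle.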

It turns out that computers are really good at working in manifolds that humans find hard to visualize, for example because they extend into twenty dimensions or have lots of complicated kinks and edges. Since many everyday problems, like human language or computer code, can be expressed as a mathematical manifold, there is a lot of potential to deploy really efficient products in the future.

Where we are in terms of computer scalability and the exploration of novel manifolds. We’re working on areas one and two, but have barely touched area number three. Image by the author, but adapted from Emil Wallner’s talk at InfoQ.

The status quo

Current developments

It might seem like developers are already using a lot of automation. But we’re only on the cusp of software automation. Automating integrated systems is almost impossible as of today. But other areas are already being automated.

For one, code reviews and debugging might soon be a thing of the past. Swiss company DeepCode is working on a tool for automatic bug identification. Google’s DeepMind can already recommend more elegant solutions for existing code. And Facebook’s Aroma can autocomplete small programs on its own.

What’s more, the Machine Inferred Code Similarity System, or MISIM for short, claims to be able to understand computer code in the same way that Alexa or Siri can understand human language. This is exciting because such a system could allow developers to automate common and time-consuming tasks, such as pushing code to the cloud or implementing compliance processes.
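To get a feel for what “code similarity” can mean, here is a toy Python sketch. To be clear, this is not how MISIM works; it’s a much cruder idea that I’m using purely for illustration. It compares two snippets by the shape of their syntax trees and ignores the names of variables and functions. The helpers `structure` and `similarity` are made up for this example.

```python
import ast
import difflib


def structure(source):
    """Return the syntax-tree node types of `source`, ignoring all names."""
    tree = ast.parse(source)
    return [type(node).__name__ for node in ast.walk(tree)]


def similarity(a, b):
    """Score from 0.0 to 1.0 for how alike two snippets are in structure."""
    matcher = difflib.SequenceMatcher(None, structure(a), structure(b))
    return matcher.ratio()


snippet_a = "def total(xs):\n    s = 0\n    for x in xs:\n        s += x\n    return s\n"
snippet_b = "def add_up(values):\n    acc = 0\n    for v in values:\n        acc += v\n    return acc\n"
snippet_c = "def greet(name):\n    print('hello', name)\n"

print(similarity(snippet_a, snippet_b))  # same structure, different names
print(similarity(snippet_a, snippet_c))  # different structure
```

The first two snippets do the same thing with different names, so a structural comparison treats them as identical. A real system would also have to recognize code that does the same thing with a different structure, which is a much harder problem.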

Exciting horizons

So far, all these automations work great on small projects, but are quite useless on more complex ones. For example, bug identification software is still returning many false positives, and autocompletion doesn’t work if the project has a very novel goal.

Since MISIM hasn’t been around for long, the jury is still out on this automation. However, keep in mind that these are the very beginnings, and these tools are expected to become a lot more powerful in the future.

Soon-to-come applications

Some early applications of these new automations could include tracking human activity. This isn’t meant as spyware, of course; rather, things like scheduling the hours of a worker or individualizing the lessons for a student could be optimized this way.

This, in itself, presents huge economic opportunities because students could learn the important stuff faster, and workers could work during the hours in which they happen to be most productive.

If MISIM is as good as it promises, it could also be used to rewrite legacy code. For example, lots of banking and government software is written in COBOL, which is hardly taught today. Translating this code into a newer language would make it easier to maintain.

Being a software developer will remain exciting for a long time to come. Photo by Brooke Cagle on Unsplash

How developers and corporations can stay ahead of the curve

All these new applications are exciting. But above them hangs a big sword of Damocles: what if the competition makes use of those automations before you catch on? What if they make developers totally obsolete?

Investing in continuous delivery and automated testing

These are certainly two buzzwords in the world of automation. But they’re important nevertheless.

If you don’t test your software before releases, you might compromise the user experience or run into security issues down the road. And experience shows that automated testing covers cases that human testers didn’t even think of, even though those cases might have been crucial.

Continuous delivery is a practice that more and more teams are picking up, and for good reason. When you bundle lots and lots of features and only release an update, say, once every three months, you often spend the next few months fixing everything that got broken in the process. Not only is this way of working a big hindrance to speedy development, it also compromises the user experience.

There’s plenty of automation software for testing, and there’s version control (and many other tools) for continuous delivery. In most cases, it seems better to pay for these automations than to build them yourself. After all, your developers were hired to build new projects, not to automate boring tasks.
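Here is a minimal sketch of how an automated test can reach cases nobody wrote down by hand. Everything in it is an assumption made for illustration: `clamp` stands in for whatever function you actually want to test, and `run_random_checks` is a made-up helper that throws a thousand random inputs at it. It uses only Python’s standard library.

```python
import random


def clamp(value, low, high):
    """Hypothetical function under test: restrict `value` to [low, high]."""
    return max(low, min(value, high))


def run_random_checks(trials=1000, seed=0):
    """Check `clamp` against randomly generated inputs."""
    rng = random.Random(seed)  # fixed seed so failures are reproducible
    for _ in range(trials):
        low = rng.randint(-100, 100)
        high = rng.randint(low, 100)
        value = rng.randint(-1000, 1000)
        result = clamp(value, low, high)
        # The result must always land inside the allowed range.
        assert low <= result <= high
        # Values already inside the range must pass through unchanged.
        if low <= value <= high:
            assert result == value
    return trials


checked = run_random_checks()
print(f"{checked} random cases passed")
```

A human tester might try five or ten inputs. The loop tries a thousand, including boundary cases like `low == high` that are easy to forget.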

If you’re a manager, consider these purchases an investment. By doing so, you’re supporting your developers the best you can because you’re capitalizing on what they’re really good at.

The left shift: including developers in the early stages of every project

Oftentimes, projects get created somewhere in upper management or close to the R&D team, and then get passed down until they reach the development team, which then has the task of making this project idea real.

However, since not every project manager is also a seasoned software engineer, some parts of the project might be implementable by the development team, while others would be costly or pretty much impossible.

That approach may have been legitimate in the past. But as lots of the monotonous parts of software development — yes, those parts exist! — are being automated, developers are getting a chance to get more and more creative.

This is an excellent chance to move developers left, i.e., to involve them in the planning stages of a project. Not only do they know what can be implemented and what can’t; with their creativity, they might also add value in ways that are not imaginable a priori.

Make software a top priority

It’s been a brief five years since Microsoft’s Satya Nadella proclaimed that “every business will be a software business”. He was right.

Not only should developers shift left in management. Software should shift up in priorities.

If the current pandemic taught you anything, then it is that much of life, and value creation, happens online these days.

Software is king. Paradoxically, this becomes more apparent the more of it gets automated.

The bottom line: geeks are becoming leaders

When I was at school, people who liked computers were deemed unsociable kids, nerds, geeks, unlikeable creatures, and zombie-like beings devoid of human feelings and passions. I really wish I were exaggerating.

As time goes on, however, more and more people are seeing the other sides of developers. People who code are not regarded as nerds any more, but rather as smart folks who can build cool stuff.

Software is gaining more power the more it’s being automated. In that sense, your fear of losing your developer job due to automation is largely unfounded.

Sure, in a decade — in a few months even — you’ll probably be doing things that you can’t even imagine right now. But that doesn’t mean that your job will go away. Rather, it will be upgraded.

The fear that you really need to conquer is not that you might lose your job. What you need to shake off is the fear of the unknown.

Developers, you won’t be obsolete. You just won’t be nerds that much longer. Rather, you’ll become leaders.