It’s fact.

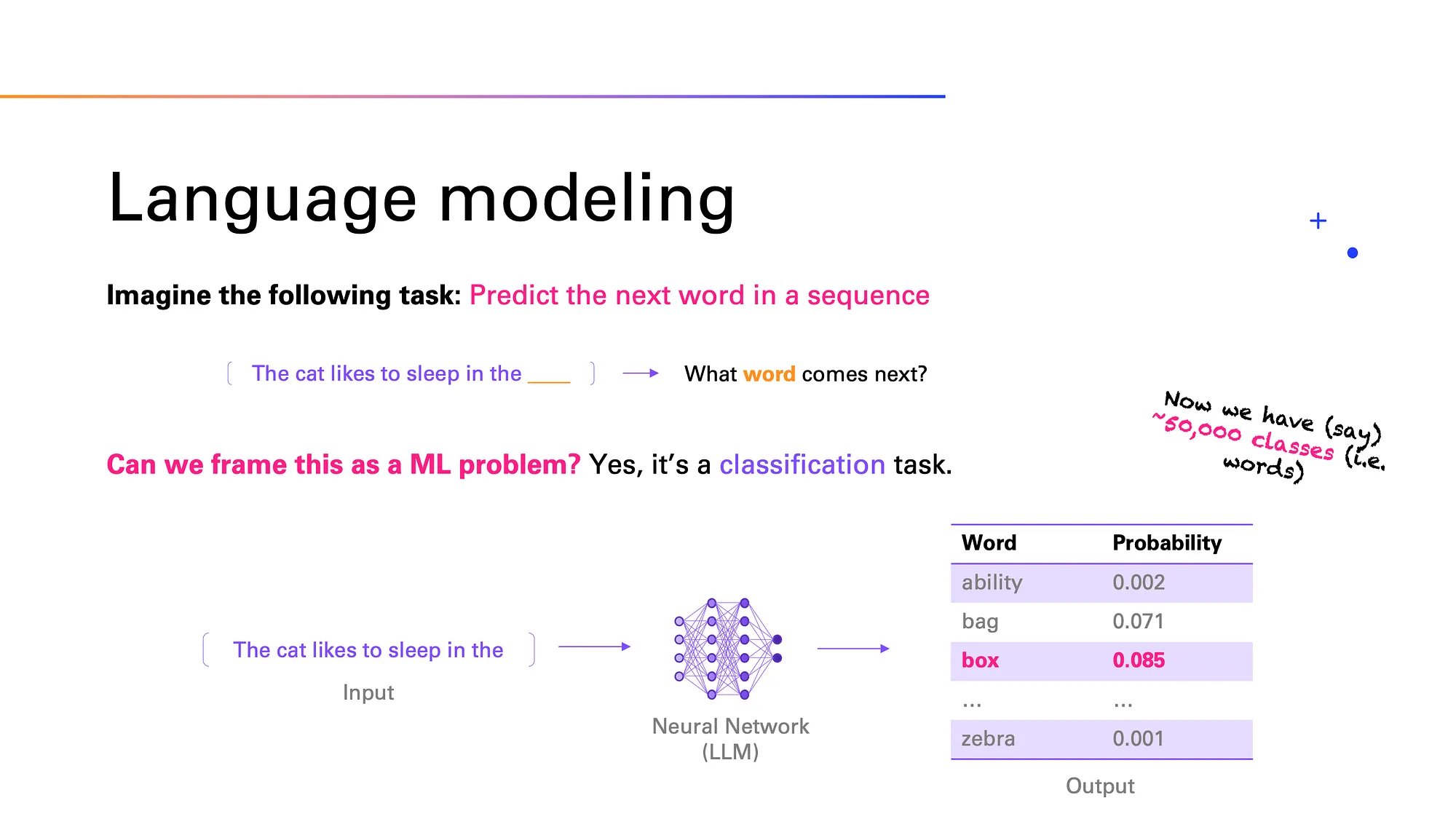

Please see https://medium.com/data-science-at-microsoft/how-large-language-models-work-91c362f5b78f and specifically this image:

Again, this is on a VERY basic level. Most commercial LLMs have billions of parameters trained on a corpus scraped from the internet, or on subsamples of it.

In the end, it’s a lot of matrix algebra, weights, and regression functions. It’s not magic, but the hipsters, VC reps, and tech bros would like us to think otherwise.
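To make the "matrix algebra and weights" point concrete, here is a toy sketch of next-token prediction in plain Python. Everything in it is made up for illustration: the three-word vocabulary, the `EMBED` and `W_OUT` values, and the function names are my assumptions, not anything from the linked article. A real LLM stacks many attention and feed-forward layers with learned weights, but the final step is roughly this: multiply a vector by a weight matrix, then turn the scores into probabilities with softmax (the same function used in multinomial logistic regression).

```python
import math

# Toy vocabulary; real models have tens of thousands of tokens.
VOCAB = ["the", "cat", "sat"]

# Hypothetical 2-dimensional embedding per token (hand-picked, not learned).
EMBED = {
    "the": [1.0, 0.0],
    "cat": [0.0, 1.0],
    "sat": [1.0, 1.0],
}

# Hypothetical output weights: one row per vocabulary token.
W_OUT = [
    [0.1, 0.2],  # row that scores "the"
    [2.0, 0.1],  # row that scores "cat"
    [0.3, 1.5],  # row that scores "sat"
]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_probs(token):
    """Probability of each vocabulary token following `token`."""
    logits = matvec(W_OUT, EMBED[token])
    return dict(zip(VOCAB, softmax(logits)))


def predict_next(token):
    """Pick the most probable next token."""
    probs = next_token_probs(token)
    return max(probs, key=probs.get)


if __name__ == "__main__":
    for word in VOCAB:
        print(word, "->", predict_next(word), next_token_probs(word))
```

Training is just the process of nudging numbers like those in `W_OUT` until the predicted probabilities match the text in the corpus. Scale that up to billions of weights and you get the commercial models.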