Big Blue supers crunch kaon decay

The Register | 28th May 2012 00:14 GMT | Richard Chirgwin

Posted on 05/28/2012 8:00:37 AM PDT by Ernest_at_the_Beach

Looking at the fundamental properties of matter can take some serious computing grunt.

Take the calculation needed to help understand kaon decay – a subatomic particle interaction that helps explain why the universe is made of matter rather than anti-matter: it soaked up 54 million processor hours on Argonne National Laboratory’s BlueGene/P supercomputer near Chicago, along with time on Columbia University’s QCDOC machine, Fermi National Lab’s USQCD (the US Center for Quantum Chromo-Dynamic) Ds cluster, and the UK’s Iridis cluster at the University of Southampton and the DIRAC facility.

The reason so much iron was needed: the kaon decay spans 18 orders of magnitude, which this Physorg article describes as akin to the size difference between “a single bacterium and the size of our entire solar system”. At the smallest scale, the calculation deals with distances of 1/1000th of a femtometer.

“The actual kaon decay described by the calculation spans distance scales of nearly 18 orders of magnitude, from the shortest distances of one thousandth of a femtometer — far below the size of an atom, within which one type of quark decays into another — to the everyday scale of meters over which the decay is observed in the lab,” Brookhaven explains in its late March release.
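The "18 orders of magnitude" figure can be checked with a line or two of arithmetic. The sketch below assumes the lab scale is exactly one meter (the quote only says "meters") and uses the SI definition of the femtometer; the variable names are illustrative.

```python
import math

FEMTOMETER_M = 1e-15                # SI definition: 1 fm = 10^-15 m
shortest_m = FEMTOMETER_M / 1000    # "one thousandth of a femtometer"
lab_scale_m = 1.0                   # assumption: "scale of meters" taken as 1 m

# Number of powers of ten between the two distance scales
orders_of_magnitude = math.log10(lab_scale_m / shortest_m)
print(f"{orders_of_magnitude:.1f} orders of magnitude")
```

With a lab scale of a few meters rather than one, the result shifts only by a fraction of an order, which is consistent with the "nearly 18" wording.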

Back in 1964, a Nobel-winning Brookhaven experiment observed CP (charge parity) violation, setting up a long-running mystery in physics that remains unsolved.

“The present calculation is a major step forward in a new kind of stringent checking of the Standard Model of particle physics – the theory that describes the fundamental particles of matter and their interactions – and how it relates to the problem of matter/antimatter asymmetry, one of the most profound questions in science today,” said Taku Izubuchi of the RIKEN BNL Research Center and BNL, a member of the research team that published their findings in Physical Review Letters.

The research is seeking to quantify how much the kaon decay process departs from Standard Model predictions. This “unknown quantity” will then be hunted in calculations in the next generation of IBM supercomputers, BlueGene/Q. ®

TOPICS: Computers/Internet; Science

KEYWORDS: hitech; kaondecay; stringtheory

To: All

Some more interesting stuff ....on the computer:

*********************************************

IBM's BlueGene/Q super chip grows 18th core

*******************************EXCERPTS*******************************************

By Timothy Prickett Morgan

22nd August 2011 17:00 GMT

It's nice to have a spare

Hot Chips The mystery surrounding the number of cores in the 64-bit Power processor that will be at the heart of the 20 petaflops "Sequoia" BlueGene/Q supercomputer has been finally cleared up.

Back at the SC10 supercomputing conference in November 2010, a software engineer working on the BlueGene/Q system told El Reg that the processor module at the heart of the system would have 17 cores: one to run the Linux kernel and the 16 others to perform mathematical calculations. IBM also said at the time that this chip would be a variant of the PowerA2 "wirespeed" processor, but geared down to 1.6GHz from its 2.3GHz design speed.

In February 2011, when Argonne National Laboratory said that it was going to take a 10 petaflop super based on the BlueGene/Q design (basically half of the Sequoia machine that is going into Lawrence Livermore National Laboratory), IBM told El Reg that it was just a 16-core chip, nothing funky.

For whatever reason, neither turns out to be true. The BlueGene/Q processor, the company revealed at the Hot Chips conference at Stanford University late last week, actually has 18 cores: 16 cores for doing work, one core for running Linux services, and a spare that is intended to merely increase the yield that IBM Microelectronics can get out of its chip fabs but which can, according to George Chiu, senior manager of advanced high performance systems at IBM, be activated and used in the system, in theory.

Chiu was very clear, however, that he was not making any promises that this 18th core would be used as a hot spare in any BlueGene/Q supers, but merely that the capability is there.
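Why a spare core helps yield can be illustrated with a toy model. This is only a sketch: it assumes each core is defect-free independently with the same probability, and the value `CORE_OK = 0.98` is hypothetical, not an IBM figure. It compares a die that needs all 17 of 17 cores working with one that needs any 17 of 18.

```python
from math import comb

def chip_yield(total_cores: int, needed_cores: int, core_ok: float) -> float:
    """Probability that at least `needed_cores` of `total_cores` are good,
    assuming independent cores that are each good with probability `core_ok`."""
    return sum(
        comb(total_cores, good)
        * core_ok**good
        * (1 - core_ok) ** (total_cores - good)
        for good in range(needed_cores, total_cores + 1)
    )

CORE_OK = 0.98  # hypothetical per-core probability of being defect-free

no_spare = chip_yield(17, 17, CORE_OK)    # every core must work
with_spare = chip_yield(18, 17, CORE_OK)  # one bad core can be tolerated

print(f"17 of 17 cores good: {no_spare:.3f}")
print(f"17 of 18 cores good: {with_spare:.3f}")
```

Under these assumptions the usable fraction of dies rises noticeably once a single defective core no longer scraps the chip. Real yield depends on the fab's actual defect statistics, which the article does not give.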

Big Blue detail

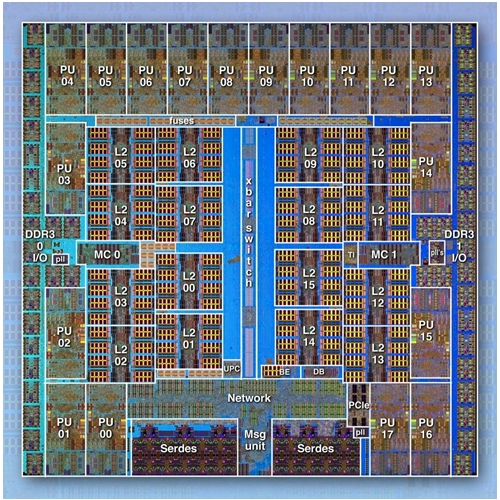

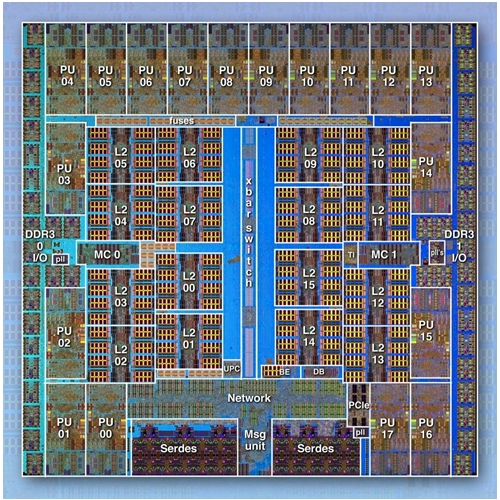

IBM gave out a lot more detail on the BlueGene/Q processor at Hot Chips, and Chiu walked El Reg through the details. The chip looks like this:

The BlueGene/Q custom Power processor

Like other processor designs these days, the BlueGene/Q processor is an example of a system-on-a-chip design, which tries to cram as many components of the system board as possible onto the chip. The BlueGene/Q processor is based on the Power A2 core that IBM created for networking devices and experimentation, and this is the block diagram of the core:

2 posted on 05/28/2012 8:08:28 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

See the link at post #2 for much more detail....

Now about the kaon decay...

Kaons Technicals

3 posted on 05/28/2012 8:16:23 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

4 posted on 05/28/2012 8:18:51 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

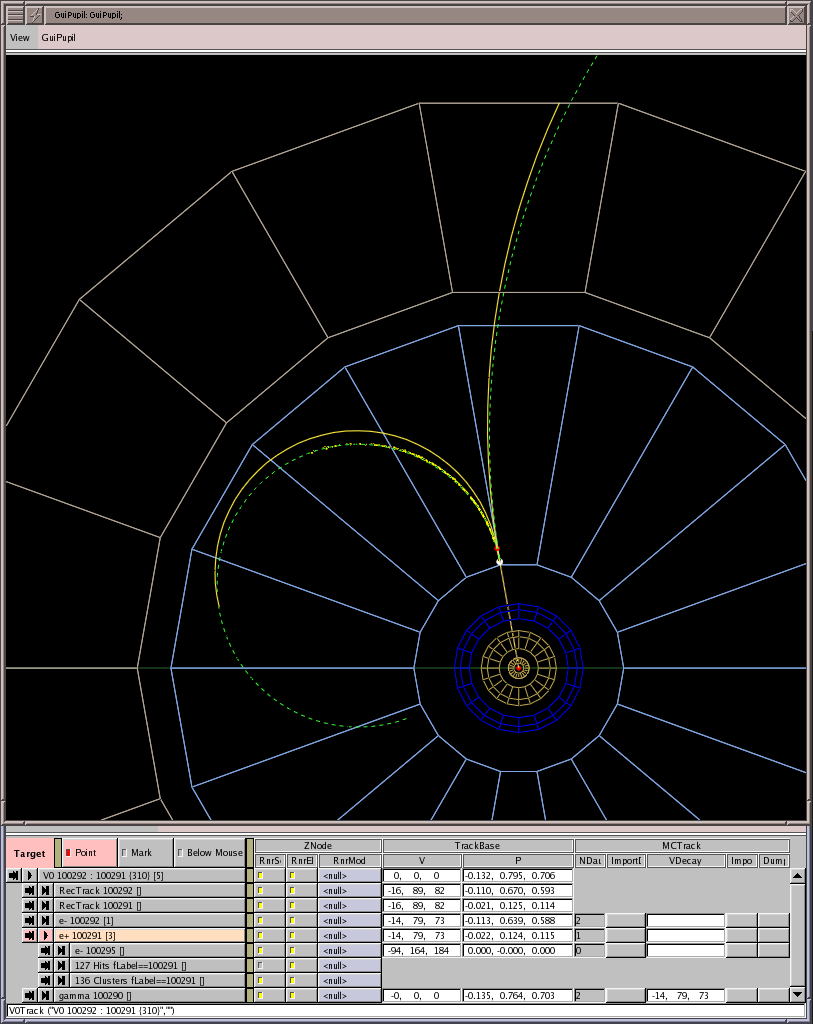

Positive kaon (K+) decay in bubble chamber

5 posted on 05/28/2012 8:23:11 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: Ernest_at_the_Beach

"the processor module at the heart of the system would have 17 cores: one to run the Linux kernel and the 16 others to perform mathematical calculations."

Or all 18 to be used to run Windows 9 home edition.

Mark

6 posted on 05/28/2012 8:27:54 AM PDT by MarkL (Do I really look like a guy with a plan?)

To: All

7 posted on 05/28/2012 8:29:02 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

I have no idea of the meaning of the image at post #7.

Just thought it was neat.

8 posted on 05/28/2012 8:31:19 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: MarkL; ShadowAce; SunkenCiv; Marine_Uncle; TigersEye; NormsRevenge; SierraWasp

One of the cores will run Linux.

And this computer power won't be used for Global Warming Modeling....wasteful use of serious compute power.

Some computational chemistry scientists would like one of these machines.

9 posted on 05/28/2012 8:37:26 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

10 posted on 05/28/2012 8:42:50 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

**********************************************EXCERPT*************************************

Professor Jim McCluskey, Deputy Vice-Chancellor (Research) at the University of Melbourne, said the machine’s gigantic capacity would assist life sciences researchers to fast track solutions to some of the most debilitating health conditions.

“Through this supercomputer, scientists will be able to advance their work in finding cures and developing improved treatments for cancer, epilepsy and other devastating diseases affecting the lives of Australians and people worldwide,” he said.

IBM pitches the water-cooled BlueGene/Q as a “green” supercomputer, typically only sucking down 80 kW per rack, with each rack able to kick out 209.7 TFlops. ®
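The per-rack figures can be cross-checked against the chip details from post #2. The clock speed (1.6GHz), the 16 compute cores, the 80 kW and the 209.7 TFlops come from the excerpts in this thread; the 8 floating-point operations per core per cycle and the 1,024 chips per rack are assumptions added here, not taken from the articles.

```python
CLOCK_HZ = 1.6e9         # from post #2: A2 core geared down to 1.6GHz
COMPUTE_CORES = 16       # from post #2: 16 cores doing work
FLOPS_PER_CYCLE = 8      # assumption: 4-wide fused multiply-add per core
CHIPS_PER_RACK = 1024    # assumption: compute chips in one rack
RACK_POWER_W = 80e3      # from the excerpt: 80 kW per rack

chip_peak_flops = CLOCK_HZ * COMPUTE_CORES * FLOPS_PER_CYCLE
rack_peak_flops = chip_peak_flops * CHIPS_PER_RACK
gflops_per_watt = rack_peak_flops / RACK_POWER_W / 1e9

print(f"peak per chip: {chip_peak_flops / 1e9:.1f} GFlops")
print(f"peak per rack: {rack_peak_flops / 1e12:.1f} TFlops")
print(f"efficiency:    {gflops_per_watt:.2f} GFlops per watt")
```

Under those assumptions the per-rack peak lands on the quoted 209.7 TFlops, which suggests the quoted number is a theoretical peak rather than a measured benchmark result.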

11 posted on 05/28/2012 8:46:30 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

IBM unveils BlueGene/Q at SC11

************************EXCERPT**************************************

Kim Cupps, leader of the high performance computing division, talks to reporters following the unveiling of Blue Gene/Q (the cabinet to her left) at SC11 in Seattle.

Photo credit: Wayne Butman/LLNL

12 posted on 05/28/2012 9:07:31 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

AND:

Femtometre

****************************************EXCERPTS****************************************

From Wikipedia, the free encyclopedia

For examples of things measuring between one and ten femtometres, see 1 femtometre.

The femtometre (American spelling femtometer, symbol fm) (Danish: femten, "fifteen"; Ancient Greek: μέτρον, metrοn, "unit of measurement") is an SI unit of length equal to 10⁻¹⁵ metres. This distance can also be called fermi and was so named in honour of Enrico Fermi and is often encountered in nuclear physics as a characteristic of this scale. The symbol for the fermi is also fm[1][2][3].

Definition

1 femtometre = 1.0 × 10⁻¹⁵ metres = 1 fermi = 0.001 picometre = 1000 attometres

1,000,000 femtometres = 1 nanometre.
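The equalities above follow directly from the SI prefix exponents. A minimal sketch of a converter, with a hypothetical `convert` helper and table that are not part of the Wikipedia excerpt:

```python
import math

# Power of ten, relative to the metre, for each unit used in the definition
SI_EXPONENT = {
    "metre": 0,
    "nanometre": -9,
    "picometre": -12,
    "femtometre": -15,
    "attometre": -18,
}

def convert(value: float, src: str, dst: str) -> float:
    """Convert `value` from unit `src` to unit `dst` via the exponent difference."""
    return value * 10.0 ** (SI_EXPONENT[src] - SI_EXPONENT[dst])

# Spot-check the relations quoted in the definition
assert math.isclose(convert(1, "femtometre", "picometre"), 0.001)
assert math.isclose(convert(1, "femtometre", "attometre"), 1000)
assert math.isclose(convert(1_000_000, "femtometre", "nanometre"), 1)
```

The comparisons use `math.isclose` because powers of ten such as 0.001 are not exactly representable in binary floating point.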

For an example of lengths in this unit, the diameter of a gold nucleus is approximately 8.45 femtometres.[citation needed]

History

The femtometre was adopted by the 11th Conférence Générale des Poids et Mesures, and added to SI in 1964.

The fermi is named after the Italian physicist Enrico Fermi (1901–1954), one of the founders of nuclear physics. The term was coined by Robert Hofstadter in a 1956 paper published in the Reviews of Modern Physics journal, "Electron Scattering and Nuclear Structure"[4]. The term is widely used by nuclear and particle physicists. When Hofstadter was awarded the 1961 Nobel Prize in Physics, the term appeared in the text of his Nobel Lecture, "The electron-scattering method and its application to the structure of nuclei and nucleons" (December 11, 1961).[5]

13 posted on 05/28/2012 9:14:52 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

14 posted on 05/28/2012 9:19:14 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: Ernest_at_the_Beach; AdmSmith; bvw; callisto; ckilmer; dandelion; ganeshpuri89; gobucks; ...

15 posted on 05/28/2012 9:20:23 AM PDT by SunkenCiv (FReepathon 2Q time -- https://secure.freerepublic.com/donate/)

Abstract

This IBM® Redbooks® publication is one in a series of IBM books written specifically for the IBM System Blue Gene® supercomputer, Blue Gene/Q®, which is the third generation of massively parallel supercomputers from IBM in the Blue Gene series. This document provides an overview of the application development environment for the Blue Gene/Q system. It describes the requirements to develop applications on this high-performance supercomputer.

This book explains the unique Blue Gene/Q programming environment. This book does not provide detailed descriptions of the technologies that are commonly used in the supercomputing industry, such as Message Passing Interface (MPI) and Open Multi-Processing (OpenMP). References to more detailed information about programming and technology are provided.

This document assumes that readers have a strong background in high-performance computing (HPC) programming. The high-level programming languages that are used throughout this book are C/C++ and Fortran95. For more information about the Blue Gene/Q system, see “IBM Redbooks” on page 133.

16 posted on 05/28/2012 9:21:13 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

17 posted on 05/28/2012 9:33:50 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

With four times more power than the next competitor, Japan’s K Computer (and Linux) remains at the top of the TOP500 List.

18 posted on 05/28/2012 9:44:51 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: MarkL; SunkenCiv

From the link at post #17:

*******************************EXCERPT*****************************************

Windows in Decline

Linux has dominated the list so long, it's not even broken out in the statistics when TOP500 lists are announced. With the November 2011 list, Linux holds steady at 457 of the 500. That's right – 91.4% of the top 500 supercomputers in the world are Linux-based.

Unix has picked up a few systems, though. This time around 30 of the top 500 are UNIX-based, and 11 are of "mixed" operating systems. Windows, however, took a major hit. In June, Windows had a mere 4 systems on the list. This time around, Microsoft Windows is clinging to the TOP500 with one system.

Linux has come a long way in the last 10 years. If you look at the November 2001 list, Linux accounted for only 39 of the TOP500. Unix held 443, and Windows? Well, Microsoft had just one lonely system then too.
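The percentages behind those counts are easy to reproduce. The sketch below uses only the system counts quoted in the excerpt; the dictionary names are illustrative, and since the quoted counts do not add up to 500 in either list, the remainder is reported as "not listed" rather than guessed.

```python
TOTAL_SYSTEMS = 500

# System counts as quoted in the excerpt
nov_2011 = {"Linux": 457, "Unix": 30, "Mixed": 11, "Windows": 1}
nov_2001 = {"Linux": 39, "Unix": 443, "Windows": 1}

def shares(counts: dict) -> dict:
    """Percentage of the TOP500 list held by each operating system family."""
    return {name: 100 * n / TOTAL_SYSTEMS for name, n in counts.items()}

for label, counts in (("Nov 2001", nov_2001), ("Nov 2011", nov_2011)):
    not_listed = TOTAL_SYSTEMS - sum(counts.values())
    print(label, shares(counts), f"not listed in excerpt: {not_listed}")
```

For November 2011 this reproduces the 91.4% Linux share stated in the article.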

19 posted on 05/28/2012 9:51:45 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)

To: All

I am posting all of this with a new Distro I am trying out....as a replacement for Ubuntu with Unity and Mint....

Name is Fuduntu....64 bit variety.

There seem to be a few issues...on my Llano A6-3650 system tho...

20 posted on 05/28/2012 10:01:50 AM PDT by Ernest_at_the_Beach (The Global Warming Hoax was a Criminal Act....where is Al Gore?)
