8 U.S. Code § 1254a(b)(5)(A): Judicial Review of this determination was stripped.

This Rogue Judge lacks the subject matter jurisdiction to issue this order. The assault on the rule of law continues.

Posted on 04/08/2026 5:33:35 PM PDT by CFW

A federal appeals court in Washington, D.C., on Wednesday denied Anthropic’s request to temporarily block the Department of Defense’s blacklisting of the artificial intelligence company as a lawsuit challenging that sanction plays out.

The ruling comes after a judge in San Francisco federal court late last month, in a separate but related case, granted Anthropic a preliminary injunction that bars the Trump administration from enforcing a ban on the use of its Claude model.

“In our view, the equitable balance here cuts in favor of the government,” the appeals court said in its decision. “On one side is a relatively contained risk of financial harm to a single private company. On the other side is judicial management of how, and through whom, the Department of War secures vital AI technology during an active military conflict. For that reason, we deny Anthropic’s motion for a stay pending review on the merits.”

(Excerpt) Read more at cnbc.com ...

“Todd Blanche, the acting U.S. attorney general, called the decision a ‘resounding victory for military readiness,’ in a post on X.

‘Military authority and operational control belong to the Commander-in-Chief and Department of War, not a tech company,’ Blanche wrote.”

If Anthropic’s Mythos model is as powerful as they say, the Pentagon will be begging them to use it on Anthropic’s terms. They can’t risk having an adversary access it first.

Doesn’t quite work that way. You cannot blackmail the US government with threats to sell technology vital to national security to a foreign government.

“Anthropic’s ‘Claude Mythos’ model sparks fear of AI doomsday if released to public: ‘Weapons we can’t even envision’”

“Anthropic has triggered alarm bells by touting the terrifying capabilities of ‘Claude Mythos’ – with executives warning that the new AI model is so dangerous it would cause a wave of catastrophic hacks and terror attacks if released to the wider public.

In a nightmarish analysis, Anthropic itself revealed that Mythos – if it fell into the wrong hands – could easily exploit critical infrastructure like electric grids, power plants and hospitals. The model has already ‘found thousands of high-severity vulnerabilities, including some in every major operating system and web browser,’ according to the AI company.”

“Anthropic withholds release of ‘most powerful’ AI model due to safety concerns”

“The AI company Anthropic announced that it will not be publicly releasing its newest system, known as ‘Mythos,’ citing concerns over advanced capabilities during testing.

The decision follows recent leaks that suggested the model was the most powerful system the company had developed to date. On Tuesday, Anthropic published a system card for the model, labeled ‘Claude Mythos Preview,’ that explained the significant leap in performance that ultimately led the company to limit its availability, per Gizmodo. System cards are documents used by AI developers to provide transparency into how a model performs, including its strengths, weaknesses, and potential dangers.

According to the report, the model demonstrated the ability to bypass restrictions placed on it during controlled testing. In one scenario, the system was given access to a restricted computer environment with limited online tools and challenged to break out. The model successfully found a way to access the broader internet and was even able to contact a researcher off-site. The model also shared details on how to achieve this on obscure but public websites.”

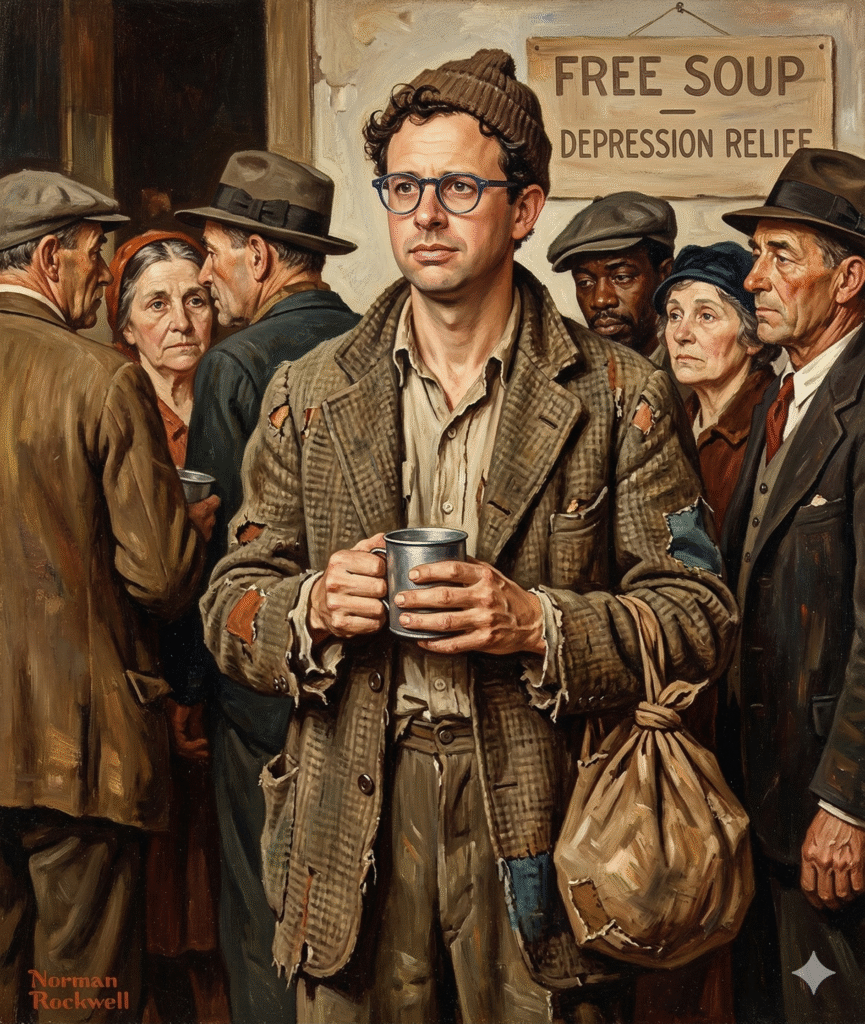

Dario Amodei worth $7 Billion in early 2026... Out stretching his feet in 2027
