Posted on 05/09/2018 9:43:07 AM PDT by dayglored

I was cranking out the FORTRAN on a PDP-11.

In 1983, I used an RS-2732 (modem) board to send a data file to a sister location in Salt Lake over the phone. Ekthelthior!

Bah, people should just use VI like a real manly pro ... what?

Then again, for the truly manly, Ed is the standard!

https://www.gnu.org/fun/jokes/ed-msg.html

hahaha

Uh, no. Notepad is not 'fixed'. One particularly obnoxious issue with Notepad is fixed. Try bringing a 20+MB text file into Notepad. It wants to load the entire file into memory, rather than just what it actually needs.
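To illustrate the "only what it actually needs" idea, here is a minimal Python sketch of reading a single window of a file by byte offset instead of slurping the whole thing. The `read_window` helper and the tiny demo file are made up for illustration; this is not how Notepad or any particular editor works.

```python
import os
import tempfile


def read_window(path, offset, size=64 * 1024):
    """Return up to `size` bytes starting at byte `offset`.

    Only the requested window is read; the rest of the file stays on disk.
    (Hypothetical helper, not taken from any real editor.)
    """
    with open(path, "rb") as f:
        f.seek(offset)
        return f.read(size)


# Demo: a small temporary file stands in for a 20+MB one.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"line one\r\nline two\r\nline three\r\n")
    demo_path = tmp.name

# "line one\r\n" is 10 bytes, so offset 10 is the start of the second line.
window = read_window(demo_path, offset=10, size=8)
os.remove(demo_path)
```

An editor built this way would fetch a new window as you scroll, at the cost of more bookkeeping than the load-everything approach.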

The compatibility fix is welcome, but notepad is still the worst editor on the planet short of 'edlin'.

Note: Zeugma is a 'vi' fan.

Depends on whether you hit a vital component.

For example, if your hard disk setup is RAID1, and you hit one disk, no big deal, the other is still working for you.

OTOH, if you hit the CPU fan, the next time you powered it up, your CPU probably converted itself into a little pile of slag.

So, a CRLF did exactly what you'd think.

Of course, these days, nothing actually works like that. A LF advances to the beginning of the next line as opposed to just scrolling down one line.
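For anyone who wants to see what a file actually contains, a rough Python sketch for counting and normalizing the three line-ending conventions follows. The function names are mine, and the sample bytes are made up.

```python
def count_line_endings(data: bytes) -> dict:
    """Count CRLF pairs, bare LFs, and bare CRs in a byte string."""
    crlf = data.count(b"\r\n")
    lf = data.count(b"\n") - crlf   # LFs that are not part of a CRLF pair
    cr = data.count(b"\r") - crlf   # CRs that are not part of a CRLF pair
    return {"CRLF": crlf, "LF": lf, "CR": cr}


def to_lf(data: bytes) -> bytes:
    """Convert CRLF and bare CR line endings to a single LF."""
    # Replace CRLF first so the pair does not become two LFs.
    return data.replace(b"\r\n", b"\n").replace(b"\r", b"\n")


# Made-up sample mixing all three conventions.
sample = b"dos line\r\nunix line\nold mac line\rlast"
counts = count_line_endings(sample)
normalized = to_lf(sample)
```

Work on bytes rather than text here, since Python's text mode translates line endings on read by default and would hide the difference.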

Ummm, these days 20MB is nothing. I just now created a 24MB text file on my Win7 VM, loaded it into Notepad, which took about 3 seconds, and wrote it back out successfully (under 1 sec). When you have multiple GB of core, assuming the app's working set can handle the size, what's the problem with editing in-core?

> Zeugma is a 'vi' fan.

At present that's my favorite general purpose editor too, at least for system stuff and light programming. Mainly because every system has it (on Windows via Cygwin) so I can depend on it. I haven't used emacs on a regular basis in years. For other stuff on Windows I use TextPad, and Gedit on everything else.

And I still require my sysadmins to learn /bin/ed for those times when you're on an 80x24 console with a system that's got a failed data disk, and you're in a single-user shell trying to edit /etc/fstab to disable mounting the failed disk and make the system bootable, and even vi isn't working:

    # mount -o remount,rw /
    # cd /etc
    # /bin/ed fstab
    64
    P
    *1
    /dev/sda1 / ext4 defaults 0 1
    *
    /dev/sdb1 /data ext4 defaults 0 1
    *s@/@#/
    #/dev/sdb1 /data ext4 defaults 0 1
    *w
    65
    *q

I know, I know, call me a wuss because of the 'P'. So I like a prompt, okay???

I go back to those old days, and wrote I/O routines for ASR33 TTYs that did exactly that. In PDP-8 assembler.

Came in handy a few years later when I was writing routines for a Diablo daisy-wheel printer that did underscoring by doing an explicit CR and then over-striking the line with the underscore character as needed.

Explicit LF wasn't nearly as common, which is why it made more sense for Unix to use it as the EOL/newline, leaving CR available for the over-strike capability.
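The over-strike trick is easy to sketch in Python. This is only an illustration of the idea described above, not the original printer routine; `underline_overstrike` and `render_on_paper` are hypothetical names, and the "paper" is just a list of the glyphs struck on each column.

```python
def underline_overstrike(text: str, start: int, end: int) -> str:
    """Build one printer line: the text, a bare CR, then spaces and underscores.

    Columns start..end-1 get an underscore struck over them on the second pass.
    """
    marks = " " * start + "_" * (end - start)
    return text + "\r" + marks + "\n"


def render_on_paper(line: str) -> list:
    """Simulate an impact printer: each column keeps every glyph struck on it."""
    columns = []
    col = 0
    for ch in line:
        if ch == "\r":
            col = 0                  # carriage return: back to column 0, same line
        elif ch == "\n":
            break                    # line feed: this line is finished
        else:
            while col >= len(columns):
                columns.append([])
            if ch != " ":            # a space moves the head without striking
                columns[col].append(ch)
            col += 1
    return columns


line = underline_overstrike("see note", 4, 8)
paper = render_on_paper(line)
```

Here the word "note" ends up with two glyphs per column, the letter and the underscore, which is exactly what a bare CR buys you on paper.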

Get Notepad++

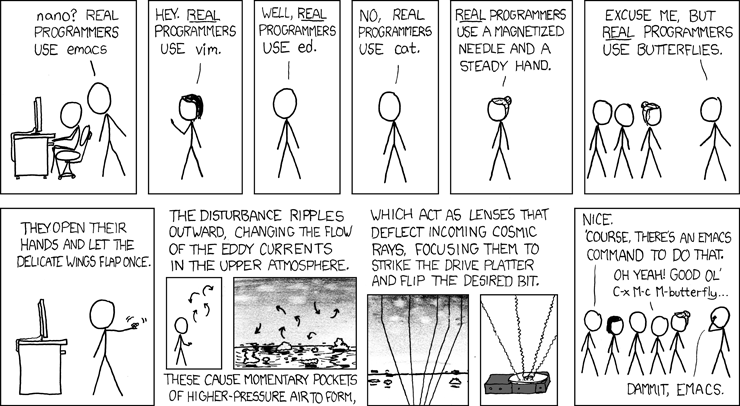

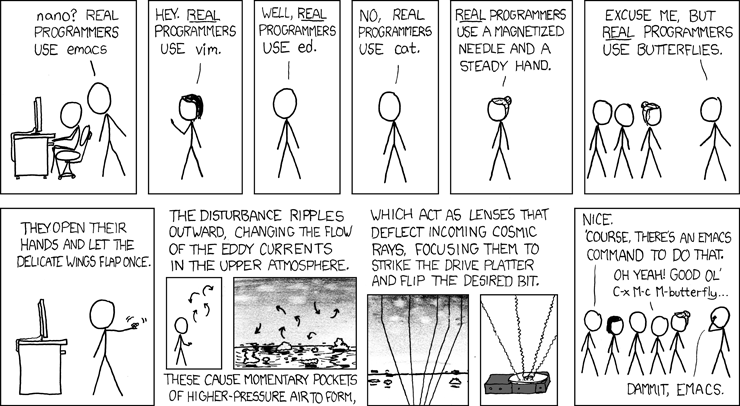

Hahaha ... XKCD, it figures :P

One of the greatest and often overlooked features of vi(m) is the macro facility. I use that almost every single day to do iterative changes over files where using sed would be unwise because the data isn't structured well enough.

As for the 20MB file, yeah, you're right. That isn't really anything. Bump that up by a couple of orders of magnitude and it will definitely cause issues. Of course, most normal people don't edit hundred-meg text files. I'm apparently not normal. At all. Thankfully, I've automated all of the tasks that regularly require parsing of multi-GB logfiles.

Had a hunch that was what you were talking about. #MeToo, my bash history is littered with lines like:

    cat foo.log | grep -vE '^foo|bar|woof$' | grep 'yes' | sed 's/^.*blah//; s/abc.*def/tweet/g' | sort -k2 | uniq >foo1.txt
    vi foo1.txt

because

    vi foo.log

is insane on a 4GB logfile.

But then, that's why the wizards at Bell Labs in the '70s gave us grep and sed and sort... and I haven't even mentioned awk...

Nah, I just shot the screen, not the computer. I was thinking about just killing the computer, but I work and play on the thing, so it’s safe... for now!

Sounds interesting. I’m not computer savvy but sure do need this because I like writing so much.

But a free word processor that offers auto-paste of everything you copy, plus auto-save, has been hard to find. Only the old but nifty Text Shield (a replacement for WordPad) does so, besides a few limited clipboard managers.

    sed 's/#/ /g' $FILE | awk '{print $6}' | sort | uniq -ic | sort -nr | sed 's/://g' > $FILE.hosts

The above is used to process 5GB of logs daily. It takes that 5GB and turns it into something easily digestible in a few minutes. It is dirty, but it does the job. The input is a BIND log file. I take the top 20 lines off that file and I know the top 20 domains requested on the DNS server. I'll sometimes get a request from a business unit like "can you tell me how often 'foo.bar.com' has been requested?" I'll run a quick for loop with an 'awk' against the .hosts files that adds columns from the various DNS servers, and can give them a daily count for the last month. The client is often surprised at how fast I can get the information put together. Gotta love that.
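For comparison, the same counting idea can be sketched in Python with a `Counter`, streaming one line at a time. This is a rough equivalent, not a drop-in replacement: `top_hosts` is a hypothetical name, the sample log lines are invented, lines with fewer than six fields are skipped (awk would print an empty line for them), and names are lowercased and stripped of ':' before counting rather than after.

```python
from collections import Counter


def top_hosts(lines, field=6, limit=20):
    """Count the Nth whitespace-separated field per line, most frequent first."""
    counts = Counter()
    for line in lines:
        # Like sed 's/#/ /g': turn '#' into a space so it splits as its own field.
        fields = line.replace("#", " ").split()
        if len(fields) >= field:
            # Case-insensitive counting (roughly uniq -i), with ':' removed.
            host = fields[field - 1].lower().replace(":", "")
            counts[host] += 1
    return counts.most_common(limit)


# Invented sample lines; the real log layout is an assumption here.
sample = [
    "09-May-2018 09:43:07.123 client 10.0.0.5#53211 (foo.bar.com): query: foo.bar.com IN A",
    "09-May-2018 09:43:08.456 client 10.0.0.6#40112 (FOO.bar.com): query: FOO.bar.com IN A",
    "09-May-2018 09:43:09.789 client 10.0.0.5#53212 (example.org): query: example.org IN A",
]
top = top_hosts(sample)
```

To run it against a real file, pass the open file object as `lines`, so the log is never held in memory all at once. Whether it beats sort and uniq on 5GB is something you would have to measure.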

One of the nice changes I've seen in recent years is that even Windows admins who used to sneer at shell scripts are waxing eloquent about the flexibility PowerShell gives them, but many still don't understand the power of what can really be accomplished with text files if you know what you're doing. I've asked our AD/DNS guys for the same kind of information as the request I mentioned above, and they just give you dumb looks. I end up having them zip up a few logs to send to me so I can dig it out myself.
